That Is Now a Board Problem.

By Hindol Datta, CPA | Fractional CFO, TrustModel.ai | AI Governance Advisor

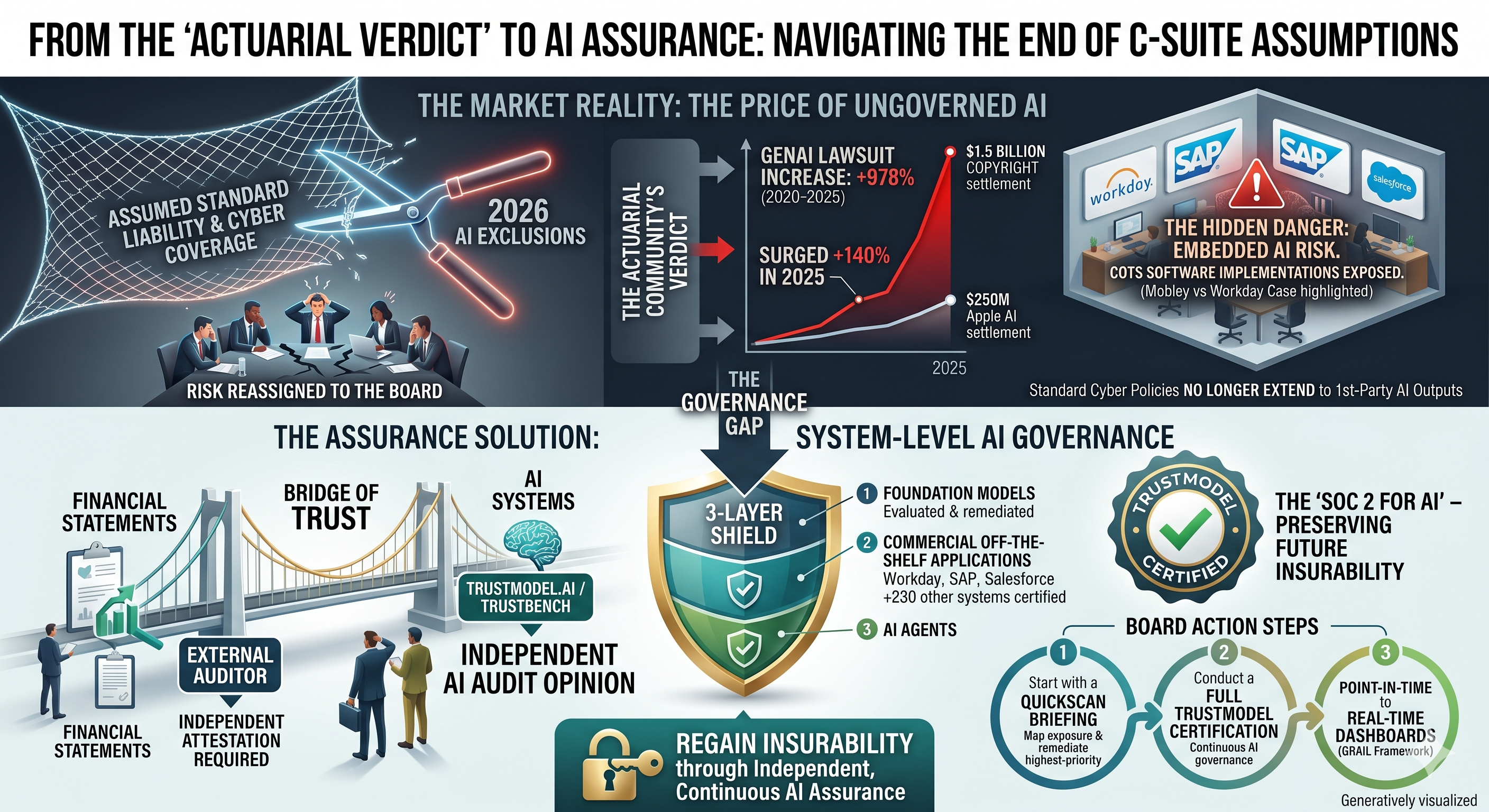

When the Market Prices What the Board Has Not

The insurance industry does not act on sentiment. It acts on mathematics. When Berkshire Hathaway, Chubb, and Travelers sought and received state regulatory approval to exclude AI-related damages from their standard corporate liability policies, and when state regulators approved more than 80 percent of those requests, the actuarial community delivered a verdict that no board presentation, consultant report, or internal AI task force had yet managed to articulate with equivalent precision: the risk of enterprise AI deployment has been formally measured, and it is large enough that the most sophisticated risk-pricing institutions in the world have declined to own it on your behalf.

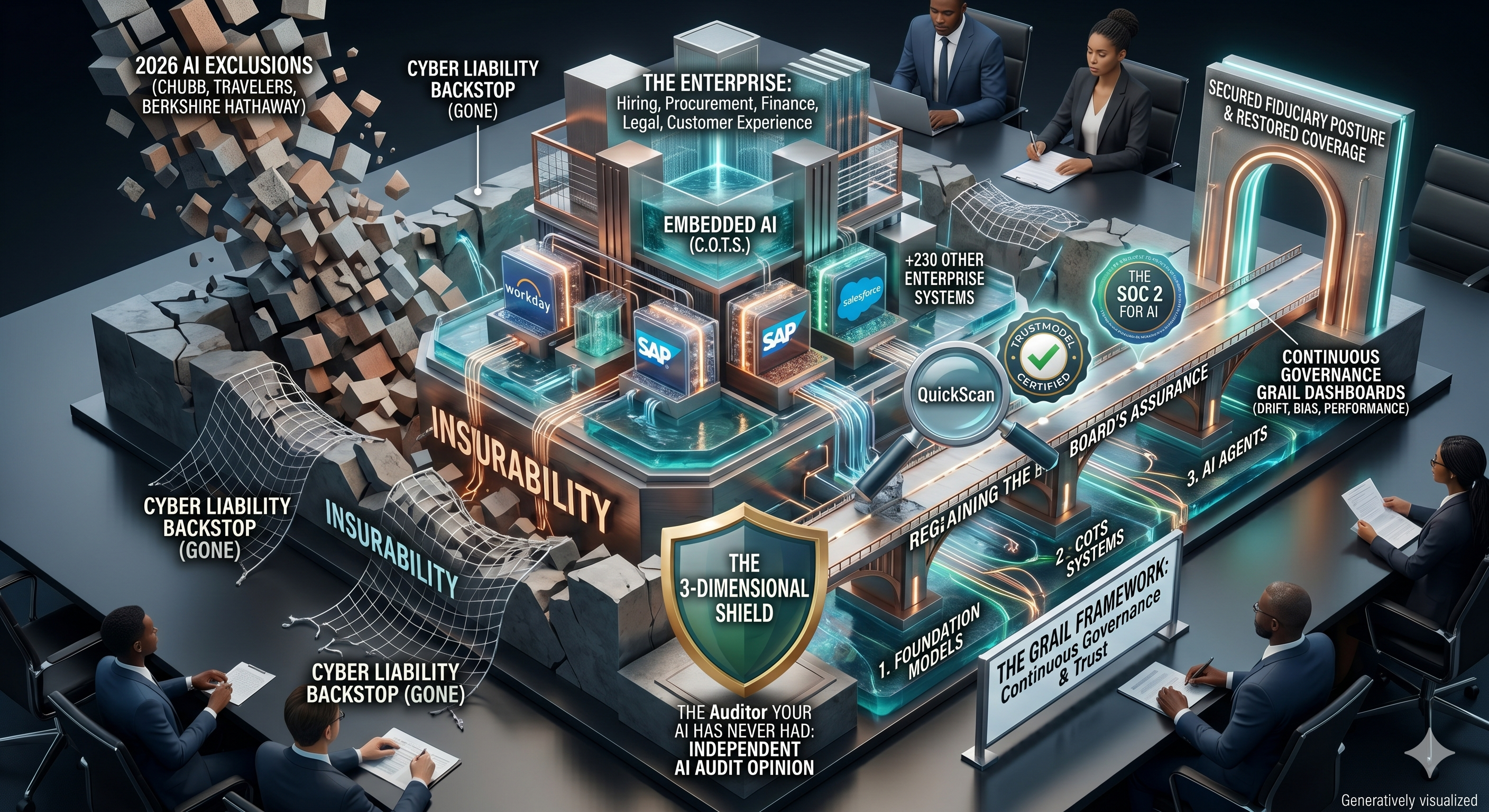

These exclusions are not provisional or experimental. They began taking effect in January 2026, and the major brokerages, including Aon, Gallagher, and Lockton, have all publicly warned their corporate clients that coverage previously assumed to exist may simply be gone. The endorsements cover a wide and consequential range of scenarios: bodily injury and property damage traceable to AI-driven errors, defamatory content generated by AI agents in marketing or communications workflows, and intellectual property infringement arising from AI-generated outputs. Crucially, standard cyber insurance policies, which many CFOs and General Counsel have historically treated as a backstop for technology-related liability, generally do not extend to first-party liabilities arising from a company’s own AI outputs. The fallback that most leadership teams assumed they had is quietly being removed from beneath them.

The litigation environment makes the timing of these exclusions especially consequential. A Gallagher Re and MIT report released in April 2026 documented a 978 percent increase in generative AI-related lawsuits between 2020 and 2025, with AI-related litigation surging a further 140 percent in 2025 alone. The theoretical risk that boards have been deferring to management, to legal teams, and to multiple levels of organizational hierarchy below the boardroom has become an active and rapidly expanding body of case law, and the insurance industry has repriced accordingly.

The insurance exclusions are the market’s way of pricing what boards have failed to govern.

The Fiduciary Stakes Have Become Personal

It is worth pausing on what the insurance withdrawal actually means in governance terms, because the instinct of most boards will be to treat it as a procurement problem, a conversation to be routed to the risk committee or delegated to the General Counsel’s office. That instinct will prove costly. When Berkshire Hathaway and Travelers exit a risk category, they are not making an operational suggestion. They are reassigning ownership of that risk to the entity that originally deployed the AI. In a corporate governance context, that entity is the board.

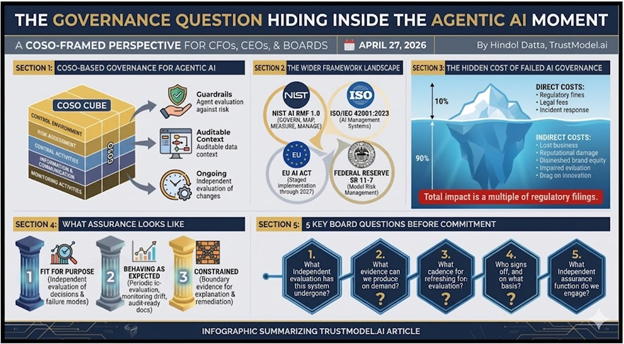

The legal landscape is reinforcing this reassignment with considerable force. Delaware fiduciary standards, which represent the most widely applicable framework for director duty of care in the United States, are beginning to be read in the context of AI decision-making. The European Union’s AI Act, which carries extraterritorial reach for companies with European operations or customers, assigns explicit accountability obligations to deployers of AI systems, not merely to the companies that built them. Directors who approved AI deployment without requiring independent assurance of system trustworthiness are exposed to a line of argument that has not yet been fully tested in court but is advancing rapidly through the regulatory and litigation pipeline.

The dollar exposure attached to this argument is no longer speculative. The largest copyright settlement in United States history was $1.5 billion, paid to resolve claims that an AI company trained its models on copyrighted works. Apple settled a separate AI misrepresentation lawsuit this week for $250 million, covering roughly 37 million devices sold to consumers on the basis of AI capabilities that did not exist at the time of sale. Neither of these cases involved a company that was reckless or indifferent to legal risk. Both involved organizations with sophisticated legal and compliance functions that nonetheless found themselves on the wrong side of AI-related liability at scale. The question before every board is not whether their AI systems are well-intentioned but whether they are independently verified to be trustworthy, and whether that verification is documented in a form that will satisfy a regulator, an underwriter, or a plaintiff’s counsel.

The Risk Hidden Inside Systems You Already Own

There is a dimension of this problem that receives insufficient attention in most board-level AI governance discussions: not the bespoke AI systems that companies build or commission, but the commercial off-the-shelf software that enterprises have been deploying for years without ever thinking of it as AI at all. Workday, SAP, and Salesforce, among more than 230 enterprise systems now assessed by independent AI assurance platforms, have embedded AI deeply into workflows that govern hiring decisions, financial forecasting, procurement approvals, and customer relationship management. Most of the organizations using these systems did not purchase them with the understanding that they were acquiring AI. They purchased them for their functionality, and the AI arrived as an embedded feature, often activated by default.

The legal consequences of this quiet embedding are now visible and severe. In Mobley v. Workday, a federal court in the Northern District of California certified a nationwide collective action in 2025 on claims that Workday’s AI-based applicant screening system discriminated against job applicants over the age of forty, as well as on the basis of race and disability. Workday itself represented in court filings that 1.1 billion job applications were rejected using its software tools during the relevant period, creating a collective action that could encompass hundreds of millions of members. In March 2026, a federal judge allowed the age discrimination claims under the Age Discrimination in Employment Act to move forward, and the court has made clear that employers who enabled Workday’s AI features are themselves exposed, facing potential discrimination allegations and document subpoenas reaching back to September 24, 2020.

The board member who believes their organization’s Workday implementation is a vendor matter rather than a governance matter should reconsider that conclusion in light of the court’s ruling. The employer who activated the feature, who received the AI-generated scores, and who made or ratified hiring decisions on the basis of those scores is the next defendant in line. The vendor’s AI is running inside the enterprise. The enterprise’s board is responsible for what runs inside the enterprise.

This is the distinction between non-systemic and systemic AI risk, and it is the distinction that most governance frameworks have not yet been designed to address. Non-systemic risk presents itself at the level of a single model, a single workflow, a single AI agent that misuses copyrighted content in a marketing campaign or generates a hallucinated contract term in a vendor negotiation. It is damaging and expensive, but it is ultimately containable. Systemic risk is of an entirely different character. It is the condition in which AI-enhanced decision-making has spread across multiple enterprise systems simultaneously, across hiring, procurement, credit, customer service, and financial planning, with no independent assessment of any of them, and with the legal and reputational consequences of a failure in any one system cascading across the others. Chubb, notably, has structured its remaining AI coverage to exclude losses that occur across multiple clients simultaneously, which is precisely the architecture of systemic failure. The insurer has named the risk it refuses to own. The board must now own it.

Systemic AI risk is the condition in which AI-enhanced decision-making has spread across the entire enterprise with no independent assessment of any of it.

The Auditor Your AI Has Never Had

Every board in the world understands one governance model without requiring explanation: the external auditor. The external auditor exists because markets, regulators, and investors long ago determined that management cannot reliably self-certify the integrity of financial statements. Independence is not a courtesy. It is a structural requirement because the entity being assessed has an inherent interest in the outcome. The audit opinion that matters is the one signed by a firm with no stake in the result.

The same logic applies to AI systems with even greater force, and yet almost no board has ever required an independent AI audit. Internal IT teams assess their own implementations. Legal counsel reviews vendor contracts. Compliance functions map against regulatory checklists. Each of these activities has genuine value, but none constitutes independent attestation of an AI system’s trustworthiness, and none produces the kind of documented, defensible evidence that an underwriter, a regulator, or a plaintiff’s attorney will find credible. The gap between what boards require for financial statements and what they require for AI systems is not a minor oversight. It is the governance failure that the insurance exclusions are now pricing.

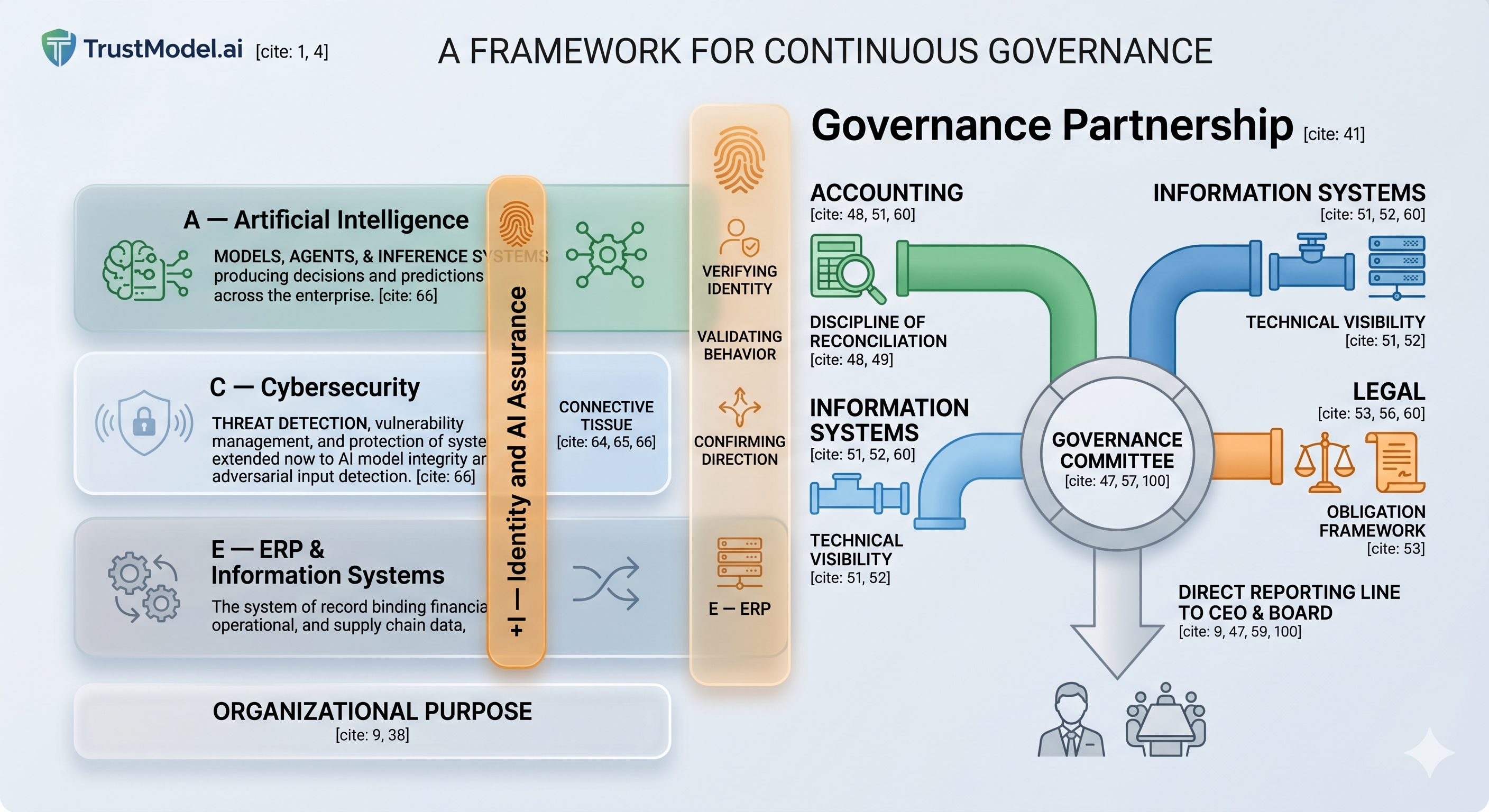

TrustModel.ai was built to close this gap, and its architecture reflects a precise understanding of where enterprise AI risk actually lives. The platform functions as an independent AI assurance provider, evaluating, remediating, and certifying AI systems across three distinct layers: foundation models; commercial off-the-shelf applications, including Workday, SAP, Salesforce, and more than 230 other enterprise systems; and AI agents. The evaluation engine, TrustBench, scores each system across ten dimensions, generating a documented assessment that maps directly to the major regulatory frameworks, including the European Union AI Act, the NIST AI Risk Management Framework, New York City Local Law 144, HIPAA, and EEOC requirements. The output of a TrustBench assessment is not a consultant’s summary or an internal review. It is an independent audit opinion, expressed as a TrustModel Certified designation, produced by a platform backed by Stanford University’s StartX accelerator and available on Google Cloud Marketplace with one-click integration into existing enterprise systems.

The SOC 2 certification standard offers the most instructive precedent for understanding what TrustModel represents to this market. When cyber insurance emerged as a meaningful product category in the 2010s, underwriters did not simply begin writing policies against cyber risk. They required evidence that the organizations seeking coverage had implemented independently verified security controls. SOC 2 certification did not merely reduce cyber insurance premiums for the companies that obtained it. It made those companies insurable in a market that would otherwise have declined to insure the risk entirely. TrustModel Certified occupies the same structural position in the emerging AI insurance market. As standalone AI liability coverage develops to fill the gap left by the withdrawal of standard carriers, underwriters will require documented, independent evidence of AI system trustworthiness before agreeing to price the risk at all. The TrustBench assessment is that evidence. The board that commissions it now is not merely managing risk. It is preserving the organization’s future insurability.

A Role for Systems Integrators and Internal Audit

The governance conversation that TrustModel enables is not one that boards must navigate without experienced guidance. Systems integrators providing assurance services to enterprise clients are positioned to deploy TrustModel as a structured, third-party AI assessment within existing engagement frameworks, delivering to their clients the documented, independent evaluation that boards increasingly require and that regulators are beginning to mandate. Internal audit functions, which have spent years building expertise in enterprise risk assessment and which already carry the organizational credibility to bring findings directly to audit committees, can integrate TrustBench into their annual risk programs, treating AI system assurance with the same rigor and independence that financial controls have long received.

This is precisely the pathway through which SOC 2 became a mainstream governance standard. The Big 4 and the major mid-tier audit firms did not wait for a regulatory mandate before building SOC 2 practices. They recognized that their enterprise clients faced a governance gap, that the gap carried significant liability, and that the audit profession was structurally positioned to fill it. The firms that moved first built practices that generated substantial revenue and client trust for years before the rest of the market caught up. The AI assurance gap is structurally identical. The liability is greater, the regulatory pressure is more acute, and the window for early-mover advantage is now open.

The Conversation Your Board Needs to Have

The boards, CFOs, chief executives, and General Counsel reading this piece are almost certainly operating organizations that have deployed AI, have activated AI features within commercial off-the-shelf systems, and have not yet commissioned an independent assessment of any of it. The insurance market has already rendered a judgment on the risk profile of that posture. The litigation statistics document what happens when that posture is challenged in court. The regulatory frameworks in the United States and Europe are completing the accountability architecture that will govern what follows.

The companies that will emerge from this period with their governance reputations intact, their insurance coverage restored, and their boards protected from personal liability are the ones that treat AI assurance the way they have always treated financial assurance: as a board-level requirement, independently delivered, documented against recognized standards, and renewed on a continuous basis. The GRAIL framework, developed through TrustModel’s strategic alliance with RiskOpsAI, represents the frontier of this posture, moving from point-in-time certification toward continuous AI governance with real-time dashboards monitoring drift, bias, and performance anomalies across the enterprise. This is where governance is going. Organizations that have not yet taken the first step will find it increasingly difficult to explain to regulators and underwriters why they have not done so.

The first step is a QuickScan: a rapid, structured assessment of an organization’s AI exposure across its deployed systems, its commercial off-the-shelf integrations, and its AI agent environment. The QuickScan produces a board-ready output that maps current AI deployment against regulatory and governance requirements, identifies the highest-priority remediation actions, and establishes the documented baseline from which a full TrustModel certification program can proceed. It is, in the precise sense of the term, the conversation that the board needs to have, rendered in the language that boards understand.

Start the Conversation

I work with boards, CFOs, systems integrators, and executive leadership teams to lead the AI governance conversation and to bring TrustModel’s integrated assurance capability to the table. If your organization has not yet had a structured discussion about AI insurability, independent AI assurance, or the exposure within your commercial off-the-shelf systems, that conversation is worth starting now, and I would welcome the opportunity to lead it with you.

Connect with me directly on LinkedIn or reach out via hindol@efuturescfo.com to arrange a QuickScan briefing for your board or executive team. The window in which early governance action confers the greatest advantage is precisely the window we are in.

Hindol Datta | CPA, CMA, CIA, MBA | Fractional CFO, TrustModel.ai | hindol@efuturescfo.com

AI-assisted insights, supplemented by 25 years of finance leadership experience.