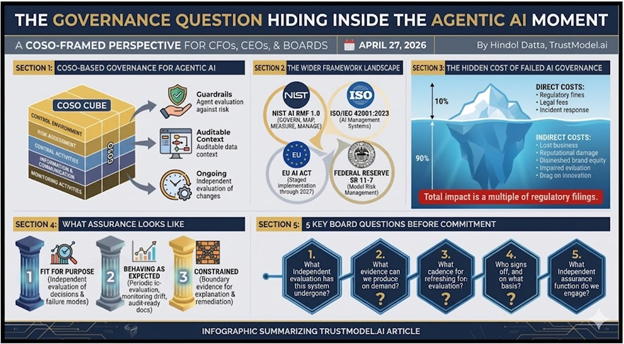

A Framework for Continuous Governance Across AI, Cybersecurity, and ERP

By Hindol Datta | Fractional CFO, TrustModel.ai | May 2026

Most organizations that have invested seriously in a modern information architecture, binding an ERP system to specialist applications and layering artificial intelligence across the whole, believe they have solved a problem that is, in truth, only beginning. The architecture they have built is not a finished product; it is a living system, and living systems obey thermodynamics as surely as they obey the intentions of the people who designed them. Without continuous, disciplined governance, that system will drift away from its intended configuration, and the organization will find itself moving in a direction its leadership did not choose and cannot easily correct. The argument of this piece is that Identity and Access Management and AI Assurance, governed by a formal partnership of accounting, information systems, and legal disciplines reporting to the chief executive and the board, are the mechanisms that keep a cohesive information system aligned with organizational purpose over time, and that every company operating at scale has an obligation to build this governance function, not as an insurance policy against catastrophe, but as the structural precondition for sustained customer value creation.

The Cohesive System: ERP, Specialist Applications, and AI as a Single Organism

The architecture of the modern enterprise is considerably more interconnected than most leadership teams consciously acknowledge, and that gap between what organizations have built and what they believe they have built is itself a governance risk. An ERP system, whether SAP, Oracle, Microsoft Dynamics, or NetSuite, is almost never a standalone platform in any organization of meaningful scale; it is the central repository through which data from dozens of specialist applications flows continuously, with customer relationship management platforms feeding revenue data, procurement systems feeding cost data, human resources platforms feeding workforce data, and logistics systems feeding supply chain data that the finance function then depends on for its most consequential reporting. Artificial intelligence models now sit above this entire architecture, consuming the aggregated data to generate forecasts, flag anomalies, automate decisions that once required human judgment, and surface insights at a speed and volume that no analyst team could replicate.

When this architecture functions well, the results are genuinely transformational. Decisions are made faster and with better information; customer value compounds because the organization can sense and respond to its environment with a precision that was structurally unavailable a decade ago; and the organization’s finite human capacity can be directed toward creative and strategic work rather than toward reconciling imperfect information. The system moves in the right direction because the data flowing through it is trustworthy, the decisions it generates are auditable, and every person and automated process acting on those decisions operates within parameters explicitly authorized by someone in authority.

The mistake that most organizations make, and it is a mistake with consequences that accumulate slowly and then arrive suddenly, is to treat the construction of this architecture as the end of the problem rather than the beginning of a permanent governance obligation. A cohesive information system is not a machine that, once assembled and switched on, continues to produce reliable outputs indefinitely. It is a complex adaptive system, which means it exhibits the properties that complexity theory has documented across biological, economic, and technological domains: emergence, where the behavior of the whole cannot be predicted from the behavior of its components; nonlinearity, where small perturbations in one part of the system produce disproportionate consequences elsewhere; and tight coupling, where the failure of one subsystem propagates faster than any human intervention can contain. These properties do not disappear because the system was well-designed at inception. They are inherent in its nature, and they mean the system will drift unless the organization actively prevents it.

Operational Drift: The Entropy That Every Architecture Accumulates

Operational drift is perhaps the most underappreciated risk in enterprise technology governance, not because it is obscure or difficult to understand, but because it is gradual, undramatic, and easy to defer addressing until the cost of deferral has become very large. It does not announce itself with an incident or a failure; it accumulates across hundreds of small decisions, each individually reasonable and each made under the pressure of organizational life, that collectively move the system away from its intended configuration in ways that nobody has explicitly authorized and that no single person has the visibility to detect.

Consider the specific forms that drift takes in practice, because the abstraction of entropy is less persuasive than the concrete reality of what it produces. An access policy provisioned for a workflow supporting an integration that no longer exists remains active for months or years because deprovisioning was no one’s explicit responsibility, and the cost of investigating it seemed lower than the cost of acting on uncertainty. An artificial intelligence model trained on customer behavior from 2022 continues to generate product recommendations and demand forecasts in 2025 without retraining or revalidation, because the cadence for model governance was never formally established and the outputs have not yet diverged visibly enough to trigger an alarm. An ERP configuration modified during an acquisition integration carries forward assumptions about the acquired entity’s chart of accounts, approval hierarchies, and reporting structures that no longer align with the combined organization’s reality, corrupting the consolidated financial reporting the board relies on to make capital allocation decisions.
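The first two forms of drift described above lend themselves to a simple automated check. What follows is a minimal Python sketch rather than a prescribed implementation: the record types, field names, sample entries, and the 365-day revalidation window are all hypothetical, and an organization would substitute whatever its own inventory and governance cadence define.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class AccessPolicy:
    name: str
    integration: str           # integration the policy was provisioned for
    integration_active: bool   # False once that integration has been retired


@dataclass
class ModelRecord:
    name: str
    last_validated: date       # most recent retraining or revalidation date


def orphaned_policies(policies: List[AccessPolicy]) -> List[str]:
    """Names of policies whose supporting integration no longer exists."""
    return [p.name for p in policies if not p.integration_active]


def overdue_models(models: List[ModelRecord], today: date,
                   max_age_days: int = 365) -> List[str]:
    """Names of models not revalidated within the assumed governance window."""
    cutoff = today - timedelta(days=max_age_days)
    return [m.name for m in models if m.last_validated < cutoff]


# Hypothetical inventory entries, for illustration only.
policies = [
    AccessPolicy("legacy-vendor-sync", "vendor-portal-v1", integration_active=False),
    AccessPolicy("crm-revenue-feed", "crm-to-erp", integration_active=True),
]
models = [
    ModelRecord("demand-forecast", last_validated=date(2022, 11, 1)),
    ModelRecord("invoice-anomaly", last_validated=date(2025, 3, 15)),
]

today = date(2025, 6, 30)
print(orphaned_policies(policies))
print(overdue_models(models, today))
```

The point of the sketch is that neither check is technically difficult; each only runs if someone has been given the responsibility to maintain the inventory it depends on.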

Drift is not the exception in complex information systems; it is the thermodynamic default, and only a continuous, disciplined governance function can make alignment the default instead.

The neurological metaphor offered by the ACE lens, which brings Artificial Intelligence, Cybersecurity, and ERP into a single view, is instructive here because it captures the systemic nature of drift more precisely than a purely technical description can. The ERP system and its connected specialist applications constitute the spinal cord of the organization, the transmission network through which all operational and financial signals travel between the parts of the business and the leadership that needs to understand them. AI Assurance functions as the brain, the reasoning layer responsible for ensuring that the signals being generated are coherent, directionally correct, and consistent with the organization’s intent. Identity and Access Management functions as the eye, the perception layer that verifies, continuously and not just at moments of audit, that every actor, human or automated, that sends or receives signals through the system is authorized to do so and is behaving within the boundaries of that authorization. When drift occurs in any of these layers, the organism does not collapse immediately; it begins moving in a direction that diverges, sometimes imperceptibly at first and then with gathering speed, from the direction its leadership believes it is taking.

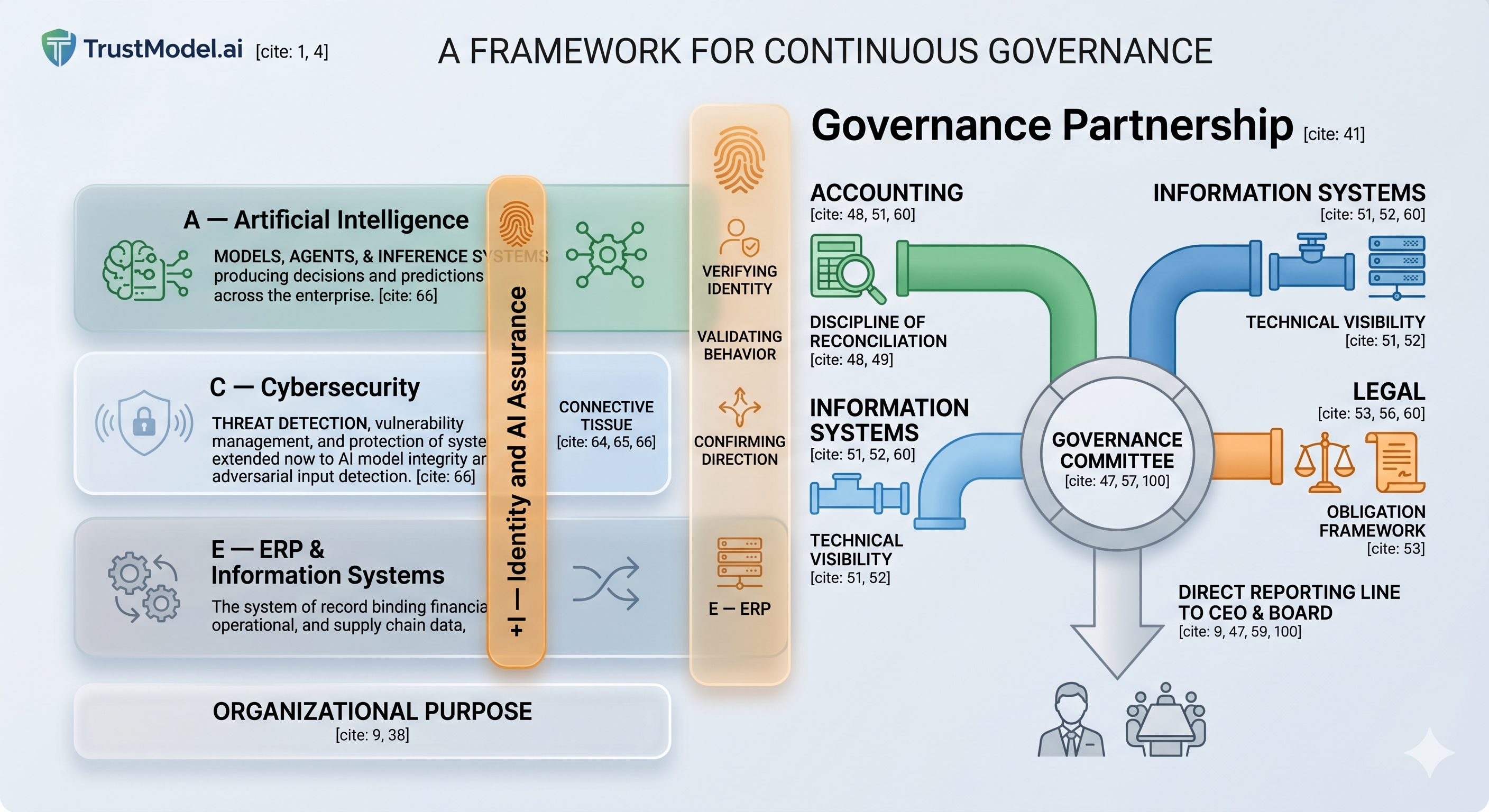

The Governance Partnership: Why Accounting, Information Systems, and Legal Must Work Together

The organizational response to operational drift that most companies default to is to assign monitoring responsibility to the information technology function, and this is, in the author’s view, a significant and costly category error that reflects a misunderstanding of what continuous governance actually requires. The information technology function has the technical visibility to detect configuration drift in systems it manages; it can observe where access policies have proliferated beyond their intended scope, where integration pipelines are degrading, and where AI model outputs have begun to diverge from validated baselines. What it does not have, and cannot be expected to have, is the reconciliation discipline to determine whether what the system says corresponds to observable business reality, or the legal and regulatory knowledge to understand what the organization is obligated to demonstrate and the consequences of failing to demonstrate it. Assigning governance solely to the technology function is like assigning navigational responsibility to the engine room: the engine room can tell you how fast the ship is moving, but it cannot tell you whether the ship is moving toward the right destination.

The governance function that can genuinely arrest operational drift requires a partnership of 3 disciplines that most organizations currently allow to operate in effective isolation from one another, and the establishment of that partnership as an informal committee with a direct reporting line to the chief executive and the board is among the highest-leverage governance investments a leadership team can make.

Accounting brings the discipline of reconciliation, the professional habit developed over decades of practice of asking whether the output the system produces corresponds to a verifiable reality external to the system. Accountants are trained, at a foundational level, to find the gap between what a ledger reports and what is actually true, and that discipline, applied to the governance of information systems more broadly, surfaces drift in data integrity, in reporting accuracy, and in the correspondence between system outputs and the business outcomes that those outputs are supposed to describe. An accounting perspective on system governance asks the question that technology teams rarely ask with sufficient rigor: is what the system is telling us actually true, and how do we know?

Information Systems brings technical visibility that neither accounting nor legal can replicate, the ability to observe at the level of configuration, code, and data pipeline where the system has changed from its intended state, where integrations are degrading in ways that have not yet produced visible business consequences but will, where AI models have begun to operate outside their validated parameters, and where access credentials have accumulated permissions that no longer correspond to current organizational roles and responsibilities. Without this technical layer, the accounting function can identify that something is producing incorrect outputs but cannot locate the source of the error, and the legal function can identify that a regulatory obligation is not being met but cannot specify the required technical remediation.

Legal brings an obligation framework that transforms internal governance from an operational preference into an organizational necessity. Legal knows what the organization is required to demonstrate to satisfy the EU AI Act, the NIST Artificial Intelligence Risk Management Framework, SEC disclosure requirements regarding material AI-related risks, and the contractual commitments the organization has made to customers and partners who rely on the integrity of its information systems. Legal knows the consequences, in terms of regulatory sanction, litigation exposure, and reputational damage, of being unable to produce an auditable record of who authorized an AI system to act and what constraints governed that authorization. Without legal’s framing, the governance committee risks achieving internal clarity while remaining fully exposed to external obligation, which is a version of the same mistake organizations make when they confuse technical monitoring with genuine governance.

Together, these 3 disciplines form a continuous monitoring capability that no single function can sustain independently, and the committee through which they operate need not be large, formally chartered with elaborate bylaws, or staffed by dedicated full-time personnel to be effective. What it requires is a regular cadence, monthly at a minimum and weekly during periods of significant system change or organizational transition; a clear and unambiguous reporting line to the chief executive and the board, with the authority to escalate findings and require remediation without being filtered through layers of operational management that have an interest in minimizing the visibility of drift; and a shared vocabulary that allows accounting, information systems, and legal to describe the state of the system in terms that each discipline can evaluate from its own professional framework. The committee’s mandate is not to prevent change, which would be both impossible and counterproductive, but to ensure that every change to the system’s configuration, access policies, AI models, and integration architecture is intentional, authorized by someone with the appropriate authority, and documented in a way that satisfies the combined requirements of all 3 disciplines.
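The committee's three tests for a change (intentional, authorized, documented to the satisfaction of all 3 disciplines) can be expressed as a small validation routine. The Python sketch below is illustrative only: the `ChangeRecord` fields, the reviewer labels, the approver names, and the sample changes are assumptions introduced here, not a description of any particular change-management tool.

```python
from dataclasses import dataclass
from typing import List, Optional, Set

# Hypothetical labels for the three disciplines on the committee.
REQUIRED_REVIEWERS = {"accounting", "information_systems", "legal"}


@dataclass
class ChangeRecord:
    change_id: str
    description: str
    stated_reason: Optional[str]   # why the change was made (intent)
    approver: Optional[str]        # who authorized it
    reviewed_by: Set[str]          # disciplines that accepted the documentation


def governance_gaps(change: ChangeRecord, authorized_approvers: Set[str]) -> List[str]:
    """Return the reasons a change fails the committee's tests (empty if none)."""
    gaps = []
    if not change.stated_reason:
        gaps.append("no stated reason")
    if change.approver not in authorized_approvers:
        gaps.append("approver lacks documented authority")
    missing = REQUIRED_REVIEWERS - change.reviewed_by
    if missing:
        gaps.append("missing review: " + ", ".join(sorted(missing)))
    return gaps


# Hypothetical approver list and change records, for illustration only.
approvers = {"cio", "controller"}
change_ok = ChangeRecord(
    "CHG-001", "Retire legacy procurement connector",
    "Integration decommissioned", "cio",
    {"accounting", "information_systems", "legal"},
)
change_bad = ChangeRecord(
    "CHG-002", "Widen service account scope",
    None, "contractor", {"information_systems"},
)

print(governance_gaps(change_ok, approvers))
print(governance_gaps(change_bad, approvers))
```

A routine like this does not block change; it only makes visible which changes entered the system without meeting the committee's mandate.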

The ACE + I Framework: Identity and Assurance as Connective Tissue

The ACE framework, which integrates Artificial Intelligence, Cybersecurity, and ERP into a unified vocabulary for the modern enterprise information architecture, provides the structural foundation on which this governance argument rests, but the framework requires an extension that reflects what continuous governance actually demands in practice. Identity and AI Assurance is not a 4th pillar standing alongside the other 3 in a parallel column; it is the connective tissue running through all 3 simultaneously, the medium through which the 3 pillars communicate with one another and through which the governance committee acquires the observability it needs to determine whether the system as a whole is behaving as intended.

| A: Artificial Intelligence | C: Cybersecurity | E: ERP and Information Systems | + I: Identity and AI Assurance (Connective Tissue) |
| --- | --- | --- | --- |
| Models, agents, and inference systems producing decisions and predictions across the enterprise. | Threat detection, vulnerability management, and protection of systems, extended now to AI model integrity and adversarial input detection. | The system of record binding financial, operational, and supply chain data; the spinal cord through which all organizational decisions travel. | The connective tissue running through all 3 pillars: the medium through which they communicate with one another and through which the governance committee gains observability of the system as a whole. |

When Identity and Access Management and AI Assurance function as connective tissue rather than as a discrete security layer managed separately from the operational architecture, the governance committee gains something that periodic audit cannot provide: continuous observability across the entire system in near real time. It can assess whether the AI model generating the quarterly demand forecast is still operating within the parameters validated at deployment, or whether a data distribution shift has caused its outputs to drift in ways that will corrupt downstream inventory decisions. It can observe whether the service account provisioned for the procurement integration is being used in patterns consistent with its intended scope, or whether it has accumulated permissions that extend its reach into financial systems that were never part of its authorization. It can determine whether the ERP configuration change implemented during the previous quarter’s system upgrade has propagated correctly across the connected specialist applications, or whether it has introduced inconsistencies in the data on which the consolidated reporting depends. Drift, in this model of governance, becomes visible before it becomes consequential, and that temporal advantage is the entire value proposition.
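Two of the checks described above can be sketched in a few lines of Python. The first compares a service account's granted permissions with its authorized baseline; the second uses the Population Stability Index (PSI), one commonly used measure of distribution shift, which is a choice made here for illustration and not a method the framework prescribes. The permission strings, bin proportions, and function names are hypothetical.

```python
import math
from typing import List, Set


def excess_permissions(granted: Set[str], authorized: Set[str]) -> Set[str]:
    """Permissions an account holds beyond its authorized baseline."""
    return granted - authorized


def population_stability_index(expected: List[float], actual: List[float],
                               eps: float = 1e-6) -> float:
    """PSI between two binned distributions, each given as per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)  # guard against log(0) and division by zero
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical procurement service account that has drifted into finance.
authorized = {"procurement:read", "procurement:write"}
granted = {"procurement:read", "procurement:write", "finance:ledger:write"}
print(excess_permissions(granted, authorized))

# Hypothetical share of forecast inputs per bin at validation time vs. today.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.10, 0.20, 0.30, 0.40]
print(round(population_stability_index(baseline, current), 3))
```

A frequently quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but the threshold that should trigger revalidation is a governance decision, not a property of the metric.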

The Return on Investment of Alignment: What Operational Drift Actually Costs

The business case for continuous governance is frequently framed as a risk argument, and that argument is compelling, but it is not the most important one, because risk framing invites organizations to calculate the probability of a bad outcome and discount the investment accordingly. The more important argument is about organizational energy, because the cost of operational drift is not primarily the cost of the catastrophic events it occasionally produces; it is the continuous, compounding cost of the organizational capacity that drift consumes before it produces any visible crisis, capacity that is therefore unavailable for the customer value creation that justifies the organization’s existence.

When operational drift goes unmonitored and uncorrected, the organization’s finite problem-solving energy is progressively consumed by the consequences of the drift itself: reconciliation backlogs created by data inconsistencies that nobody can trace to a specific source; audit remediation work triggered by control gaps that have been accumulating for quarters; system workarounds invented by operational teams to compensate for integrations that have degraded below the threshold of reliability; and incident response efforts directed at security events that continuous monitoring would have detected and contained before they required incident-level attention. This consumption of organizational energy is not visible on any income statement as a discrete line item, which is precisely why leadership teams consistently underestimate it, but its effect on the organization’s capacity for strategic and customer-facing work is as real as any explicitly budgeted expenditure.

The catastrophic cases provide the clearest illustration of what unmonitored drift costs at its extreme. In August 2012, Knight Capital Group deployed a software update that reactivated a legacy trading algorithm that had been dormant for years, a system configuration that had never been formally decommissioned because no governance function had the mandate to ensure that legacy configurations were retired when they became obsolete. Within 45 minutes of deployment, the firm had accumulated 7 billion dollars in unwanted equity positions and had lost 440 million dollars, a loss that destroyed the firm as an independent entity. The root cause was not a software defect in the engineering sense; it was the absence of the governance discipline that would have detected and remediated the dormant configuration before it could cause harm, which is to say it was a governance failure with a catastrophic financial consequence.

At a less catastrophic but far more pervasive scale, the evidence from industry research points consistently in the same direction. Research from Gartner and Panorama Consulting indicates that organizations operating ERP systems with significant configuration drift spend between 20 and 30 percent more annually on maintenance and remediation than organizations with active governance programs, a cost differential that compounds each year the drift goes unaddressed. IBM’s Cost of a Data Breach research demonstrates that breaches originating from misconfigured or compromised identity credentials cost approximately 1 million to 1.5 million dollars more per incident to remediate than breaches detected early through continuous monitoring, a gap that reflects the compounding cost of drift that has been allowed to develop without correction. McKinsey’s research on technology debt indicates that organizations carrying significant unmanaged technical debt allocate between 10 and 20 percent of their engineering and operations capacity to servicing that debt rather than to building customer-facing capabilities, which means that between 1 in 10 and 1 in 5 of the hours worked by the people responsible for the organization’s technology are spent repairing the consequences of past drift rather than creating future value.

The question is not whether the organization can afford continuous governance; it is whether it can afford the compounding cost of the energy that drift will consume in the absence of governance, and whether its leadership is prepared to explain to its board why that energy was not protected.

The return-on-investment calculation, stated without the softening that characterizes most governance recommendations, is asymmetric in a way that should make the decision straightforward. The annual cost of a well-designed continuous monitoring function, comprising the governance committee’s cadence, the tooling required for Identity and Access Management and AI Assurance observability, and the documentation discipline that satisfies the combined requirements of accounting, information systems, and legal, is measurable, bounded, and predictable. The cost of a single significant drift event, whether a regulatory finding that requires public disclosure, an AI model that has been operating outside its validated parameters for 6 months and has corrupted a full cycle of business planning, or an ERP configuration error that requires restatement of financial results, is unbounded, frequently existential in its reputational consequences, and almost always vastly larger than the cumulative cost of the governance that would have prevented it. Organizations that decline to invest in continuous governance are not making a fiscally prudent decision; they are making an uninformed one, and the blind spot usually stems from never having calculated what drift is already costing them.

What Every Organization Is Now Obligated to Build

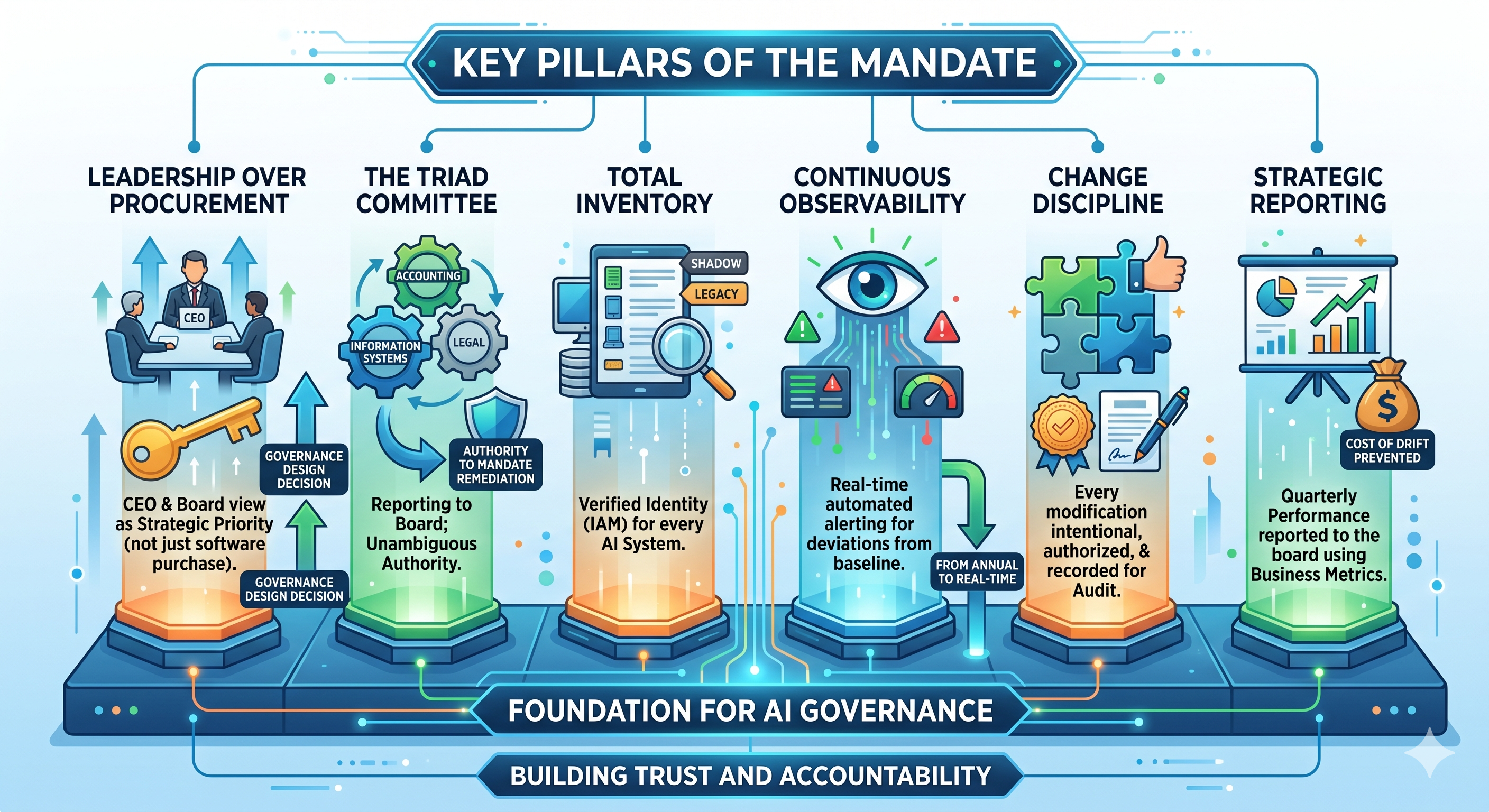

The mandate that follows from this argument is not a technology procurement recommendation, and organizations that treat it as one will make the predictable mistake of purchasing monitoring tools without establishing the governance function that gives those tools their purpose. The mandate is a governance design decision, which means it begins with leadership and with the explicit commitment of the chief executive and the board to treat the continuous alignment of the information architecture as a strategic priority rather than an operational detail delegated to the technology function.

- The accounting, information systems, and legal governance committee should be established with a formal and unambiguous reporting line to the chief executive and the board, a monthly operating cadence with the authority to convene more frequently during periods of significant system change, and the explicit mandate to require remediation when drift is detected, without that requirement being subject to negotiation with the operational teams whose work the remediation will disrupt.

- Every AI system currently operating within the organization’s information architecture, including shadow deployments that were never formally sanctioned and legacy configurations that predate current governance standards, should be inventoried and assigned a verified identity within the Identity and Access Management framework, because a system that cannot be identified cannot be governed.

- Continuous monitoring for Identity and Access Management and AI Assurance should be implemented at a cadence that reflects the speed at which drift accumulates in the organization’s specific architecture, which in most cases means near real-time automated alerting for deviations from baseline configuration rather than quarterly or annual review cycles that allow months of drift to compound before anyone observes it.

- A change governance discipline should be established that requires every modification to the system architecture, whether a configuration change, a model update, an integration revision, or an access policy amendment, to be intentional, authorized by someone with documented authority to grant that authorization, and recorded in a way that satisfies the audit and regulatory requirements that the legal function has identified.

- The state of system alignment should be reported to the board at least quarterly, using metrics expressed in business language rather than technical language, covering the volume of access policies reviewed and remediated in the period, the percentage of AI models currently operating within their validated parameters, the number of integration anomalies detected and resolved before they produced downstream consequences, and the estimated cost of the drift that was prevented.
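The first three board metrics named in the final recommendation above can be derived directly from period counts. The Python sketch below shows one possible shape for that summary; the field names and figures are hypothetical, and the fourth metric, the estimated cost of drift prevented, is left out because it depends on a costing model the organization would have to define for itself.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class PeriodCounts:
    policies_reviewed: int
    policies_remediated: int
    models_total: int
    models_within_parameters: int
    anomalies_detected: int
    anomalies_resolved_before_impact: int


def board_summary(c: PeriodCounts) -> Dict[str, float]:
    """Translate raw period counts into the board-level alignment metrics."""
    if c.models_total:
        pct_models = 100.0 * c.models_within_parameters / c.models_total
    else:
        pct_models = 0.0  # no models inventoried yet
    return {
        "access_policies_reviewed": c.policies_reviewed,
        "access_policies_remediated": c.policies_remediated,
        "models_within_parameters_pct": round(pct_models, 1),
        "integration_anomalies_detected": c.anomalies_detected,
        "integration_anomalies_resolved_before_impact": c.anomalies_resolved_before_impact,
    }


# Hypothetical quarter, for illustration only.
quarter = PeriodCounts(
    policies_reviewed=420,
    policies_remediated=37,
    models_total=20,
    models_within_parameters=18,
    anomalies_detected=12,
    anomalies_resolved_before_impact=11,
)

for metric, value in board_summary(quarter).items():
    print(f"{metric}: {value}")
```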

Direction, Not Just Motion: The Case for Treating Governance as a Strategic Investment

The goal of the cohesive information system is not, in the end, motion, because every organization is in motion regardless of how well or poorly its systems are governed, and motion without direction is simply a more energetic form of being lost. The goal is motion in the right direction, toward the customer value that justifies the organization’s investments, toward the operational excellence that makes those investments compound over time, and toward the strategic clarity that allows leadership to make consequential decisions with confidence in the integrity of the information on which those decisions rest. Identity and Access Management and AI Assurance, governed through the formal partnership of accounting, information systems, and legal, and reported through a direct line to the chief executive and the board, are the mechanisms that ensure the direction remains true even as the system grows more complex, absorbs more data, deploys more AI capabilities, and is subjected to the entropy that no architecture, however well designed, can permanently escape.

The author’s strong conviction, formed across 25 years of observing how organizations succeed and fail in the governance of their information systems, is that the companies that will create the most durable competitive advantage in the decade ahead are not necessarily those that deploy AI the fastest or build the most sophisticated integrations; they are the ones that govern their information architecture with enough discipline to ensure that the intelligence those systems generate remains trustworthy as the architecture evolves. Speed without governance is acceleration in an unknown direction, and unknown directions in complex systems tend to produce outcomes nobody intended and that are very expensive to correct.

The case against drift is therefore not a defensive posture, nor a counsel of caution addressed to organizations worried about what might go wrong. It is an argument for the organizational discipline that makes it possible to invest the organization’s energy in the initiatives that matter, the customer experiences that differentiate, the operational innovations that compound, and the analytical capabilities that create advantage, rather than in the remediation of the drift that an ungoverned system will inevitably accumulate. Every unit of energy that continuous governance redirects from repair toward creation is a unit of energy that builds the organization’s future rather than services its past. The governance committee that reports to the chief executive and the board on the state of the information architecture is not an overhead function; it is one of the most important investments in customer value that a leadership team can authorize, and organizations that recognize this early will have a meaningful and durable advantage over those that recognize it late.

If you are reading this and asking whether your organization’s information architecture is drifting in ways that have not yet become visible, that question is worth pursuing. Hindol Datta and the team at TrustModel.ai work with executives and boards on exactly this challenge. Reach out at hindol@trustmodel.ai or on LinkedIn to begin the conversation.

AI-assisted insights, supplemented by 25 years of finance leadership experience.