By Hindol Datta, CPA, CIA (Certified Internal Auditor) | Fractional CFO | AI Governance Advisor

The Statistician Who Asked the Wrong Question First

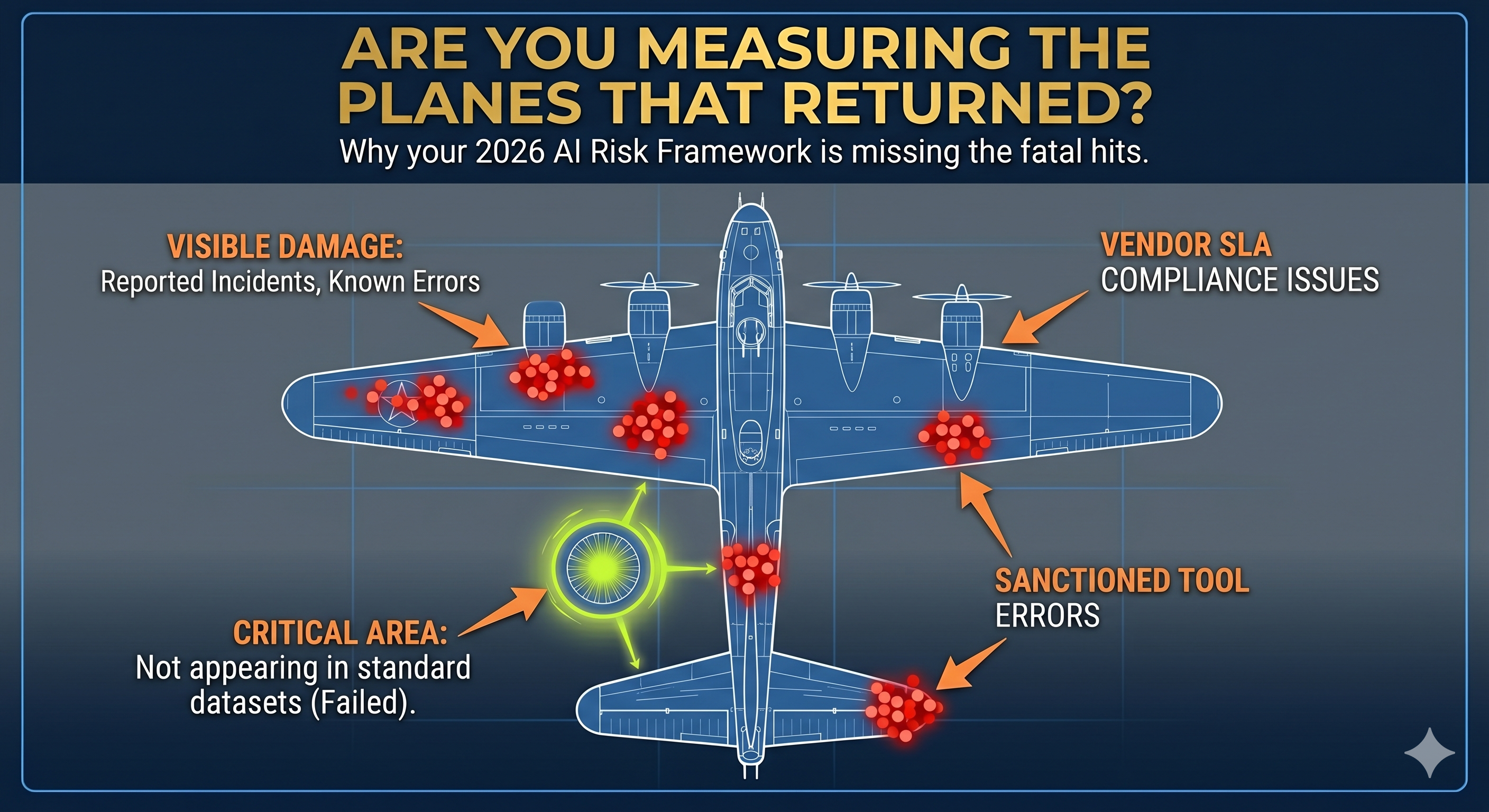

During the Second World War, the United States military brought together a group of mathematicians and statisticians to address a problem that seemed, on its surface, to have an obvious solution. Allied bombers were returning from missions over Europe riddled with bullet holes, and the military wanted to know where to add reinforcing armor to reduce losses. The analysts examined hundreds of returning aircraft and carefully mapped the damage. The wings were heavily hit. The fuselage was heavily hit. The tail sections showed consistent patterns of enemy fire. The engines, by contrast, showed almost no damage.

The intuitive recommendation was straightforward: reinforce the wings, fuselage, and tail because that is where the planes were being hit. Abraham Wald, a Hungarian-born statistician working with the Statistical Research Group at Columbia University, looked at the same data and asked a question that reframed the entire problem. He asked what the data was not showing. The planes that had been hit in the engines, he reasoned, were not in the dataset because they had not returned. The absence of engine damage in the surviving aircraft was not evidence that the engines were safe. It was evidence that engine damage was fatal. The military had been studying survivors and drawing conclusions that applied to casualties.

Wald recommended reinforcing the engines. The logic was precise, the evidence was inferential, and the recommendation ran directly counter to what the visible data suggested. It was also correct.
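Wald's inference can be made concrete with a small simulation. The sketch below is illustrative only: the per-hit loss probabilities are hypothetical values chosen for the example, not historical figures, and the function names are invented here. It assumes hits land uniformly across four sections and compares where hits actually fell with where they appear on the aircraft that returned.

```python
import random

# Hypothetical per-hit loss probabilities (assumed for illustration, not historical data).
LOSS_PROBABILITY = {"wings": 0.05, "fuselage": 0.05, "tail": 0.05, "engines": 0.60}


def simulate(num_planes=10_000, hits_per_plane=3, seed=0):
    """Count hits per section for all planes and for returning planes only."""
    rng = random.Random(seed)
    sections = list(LOSS_PROBABILITY)
    all_hits = {s: 0 for s in sections}
    survivor_hits = {s: 0 for s in sections}
    for _ in range(num_planes):
        # Assumption: every section is equally likely to be hit.
        hits = [rng.choice(sections) for _ in range(hits_per_plane)]
        # A plane returns only if it survives every hit it took.
        survived = all(rng.random() >= LOSS_PROBABILITY[s] for s in hits)
        for s in hits:
            all_hits[s] += 1
            if survived:
                survivor_hits[s] += 1
    return all_hits, survivor_hits


def share(counts):
    """Convert raw hit counts into each section's share of total hits."""
    total = sum(counts.values())
    return {s: counts[s] / total for s in counts}


all_hits, survivor_hits = simulate()
true_share = share(all_hits)
observed_share = share(survivor_hits)
for s in LOSS_PROBABILITY:
    print(f"{s:9s} true share {true_share[s]:.2f}   share seen on survivors {observed_share[s]:.2f}")
```

Under these assumptions the engines take roughly the same share of hits as every other section, yet they are underrepresented in the damage maps of the returning aircraft. An analyst who sees only the survivors would read that scarcity as safety.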

The most dangerous assumption in any complex system is that the data you can see is the data that matters most.

This principle, formalized under the concept of survivorship bias, has implications that extend far beyond military aviation. In business, organizations consistently study the visible patterns of successful competitors while remaining structurally blind to the silent failures of firms that pursued identical strategies and disappeared from the dataset entirely. In investing, the performance records of surviving funds systematically overstate the returns available to investors because failed funds are no longer reporting. In AI governance, the implications are more acute and more immediate than in almost any other domain, because the data that enterprise leadership is currently using to assess AI risk is subject to a survivorship bias so profound that it is inverting the actual risk landscape.

What Enterprise AI Risk Frameworks Are Actually Measuring

The standard enterprise AI risk framework in 2026 is built around incidents. A model produces a discriminatory output, and the incident is logged. An AI agent misclassifies a transaction, and the error is caught in reconciliation. A chatbot generates defamatory content, and the legal team is notified. Each of these events is entered into the risk register, analyzed, remediated, and reported to the audit committee as evidence that the AI governance framework is functioning. The framework is measuring the returned planes.

What it is not measuring is considerably more consequential. It is not measuring the hiring decisions made by an AI screening system that produced discriminatory outcomes for the applicants who never received a response and never filed a complaint. It is not measuring the procurement approvals influenced by an AI forecasting model that consistently undervalued vendor risk in ways that did not produce a single flagged transaction, but gradually concentrated the organization’s supply chain exposure in ways that will only become visible during the next disruption. It is not measuring the financial planning outputs generated by a model trained on historical data that systematically excluded the market conditions most relevant to the current environment.

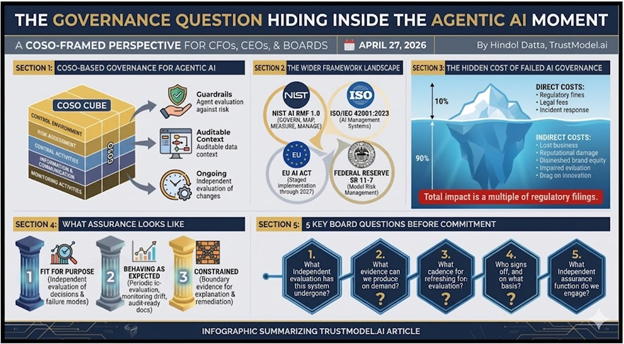

The following table illustrates the structural gap between what standard AI risk frameworks capture and what their architecture is designed to miss.

| Risk Category | What Current Frameworks Measure | What Current Frameworks Miss |
| --- | --- | --- |
| Model Output Errors | Flagged incidents post-deployment | Systematic bias in unflagged outputs across the full decision population |
| COTS AI Behavior | Vendor SLA compliance metrics | AI decision logic embedded within purchased systems such as Workday, SAP, and Salesforce |
| Agentic Actions | Completed and logged transactions | Near-misses, unauthorized attempts, and goal-drift episodes that left no audit trail |
| Training Data Quality | Data lineage documentation | Synthetic data contamination and progressive model drift below detection thresholds |
| Shadow AI Usage | IT-sanctioned tool inventory | Departmental deployments outside governance scope, now cited by 76% of organizations as a definite or probable problem |
| Regulatory Exposure | Known compliance breaches | Latent liability in unaudited workflows and COTS-embedded AI decision-making |
| Insurance Coverage | Policy terms as originally written | Exclusion clauses activated by AI deployment that narrowed or eliminated coverage retroactively |

The pattern across every row is identical. The framework measures the returning aircraft. The most consequential risk lives in the category that never made it back to the dataset.

The Three Survivorship Biases Distorting Your AI Risk Picture

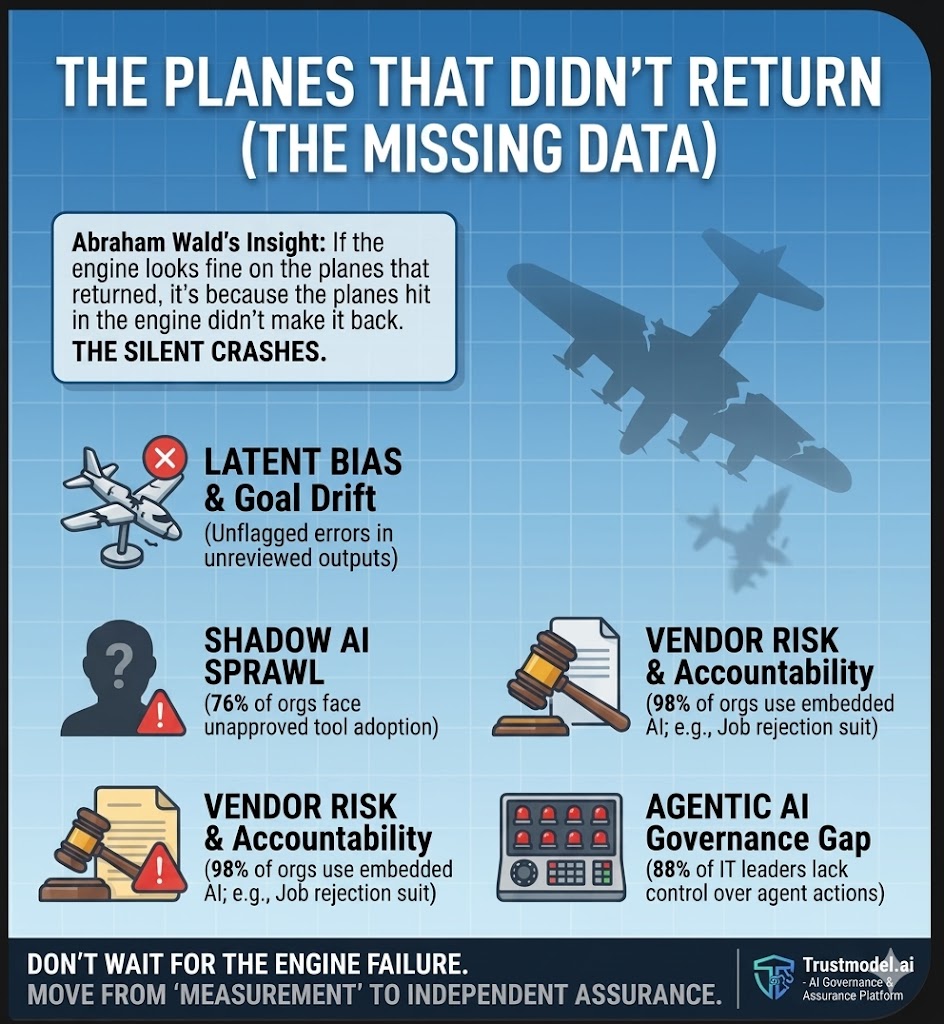

The Incident Reporting Bias

Enterprise AI risk registers are populated by incidents that were detected, attributed to AI, and escalated through existing reporting channels. Each of these conditions is a filter, and each filter removes a category of risk from the visible dataset. Detection requires that someone or some system notice the anomaly. Attribution requires that the anomaly was connected to an AI system rather than a human decision or a process failure. Escalation requires that the person who noticed it had both the organizational standing to raise it and the incentive to do so.

The Gallagher Re and MIT research published in early 2026 documented a 978 percent increase in generative AI-related lawsuits between 2020 and 2025, with AI-related litigation surging a further 140 percent in 2025 alone. That figure represents the incidents that cleared all three filters, were pursued by plaintiffs with sufficient resources and evidence, and resulted in formal legal action. The full population behind that figure, including every AI-related harm that did not clear all three filters, is not in any dataset that enterprise risk managers are currently consulting. IBM’s 2026 X-Force Threat Intelligence Index adds a further dimension: 31 percent of organizations do not know whether they experienced an AI security breach in the past 12 months. The absence of an incident report is not evidence of an absence of incidents. It is evidence of an absence of detection.
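The compounding effect of the three filters can be sketched with simple arithmetic. The example below is a minimal illustration, not a measurement method: the function names and the 50 percent pass rates are hypothetical, and each rate is read as conditional on the incident having cleared the previous filter (detected, then attributed given detection, then escalated given attribution).

```python
def visible_fraction(p_detect, p_attribute, p_escalate):
    """Share of all incidents that clears detection, attribution, and escalation."""
    for p in (p_detect, p_attribute, p_escalate):
        if not 0.0 < p <= 1.0:
            raise ValueError("each pass rate must be greater than 0 and at most 1")
    return p_detect * p_attribute * p_escalate


def implied_total(reported, p_detect, p_attribute, p_escalate):
    """Number of incidents implied by the count that reached the risk register."""
    return reported / visible_fraction(p_detect, p_attribute, p_escalate)


# Hypothetical rates: each filter passes half of what reaches it.
fraction = visible_fraction(0.5, 0.5, 0.5)
total = implied_total(12, 0.5, 0.5, 0.5)
print(f"Visible share of incidents: {fraction:.3f}")
print(f"Incidents implied by 12 register entries: {total:.0f}")
```

With these assumed rates, one incident in eight reaches the register, so 12 logged incidents would imply 96 in total. The real pass rates are unknown to most organizations, which is the point: the register alone cannot tell you the size of the population it was drawn from.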

The Vendor Accountability Bias

When an enterprise deploys a commercial off-the-shelf system that embeds AI into its workflows, the standard governance assumption is that the vendor bears primary accountability for the AI’s behavior. The contract assigns liability to the vendor. The procurement process validates the vendor’s compliance certifications. The ongoing relationship is managed by a vendor management function that monitors SLA performance rather than AI decision quality. Yet according to research published in 2026, 98 percent of organizations use at least one third-party SaaS application with embedded AI capabilities, while fewer than 30 percent have a formal AI vendor risk assessment process in place.

The Mobley versus Workday litigation, in which a federal court in the Northern District of California certified a nationwide collective action covering 1.1 billion rejected job applications processed by Workday’s AI screening system, has begun to dismantle the vendor accountability assumption with considerable legal force. Under the court’s determination, employers who enabled Workday’s AI features are themselves exposed to discrimination claims and document subpoenas reaching back to September 2020. The vendor accountability bias led enterprise leadership to believe that the AI risk in their Workday implementation was Workday’s. The federal judiciary is in the process of explaining that this conclusion was a survivorship error: it was based on a dataset of cases that had not yet reached a courthouse.

The Coverage Assumption Bias

The third survivorship bias operating inside most enterprise AI risk frameworks is the assumption that existing insurance coverage extends to AI-related liabilities. This assumption has never been tested against the actual policy language in most organizations, because the claims that would test it have not yet been filed. Berkshire Hathaway, Chubb, and Travelers have now made the Wald inference explicit. They have examined the pattern of AI-related claims, estimated the distribution of damage among cases that have not yet been filed, and concluded that the engines are being hit. Their response has been to exit the risk category entirely, seeking regulatory approval across every state in the United States to exclude AI-related damages from standard corporate liability policies, with regulators approving more than 80 percent of those requests. The standard cyber insurance policy, which most CFOs have treated as the fallback position, explicitly does not cover first-party liabilities arising from a company’s own AI outputs.

The Heisenberg Problem: When Measurement Creates False Certainty

Werner Heisenberg’s uncertainty principle, developed in the context of quantum mechanics, holds that the more precisely you measure the position of a particle, the less certainty you have about its momentum, and vice versa. The act of measurement does not neutrally observe a system. It interacts with the system in ways that alter what can be known about it. Applied to enterprise AI governance in 2026, the principle describes a condition that is both empirically documented and operationally dangerous: the organizations that believe they have the most complete picture of their AI risk are frequently the ones operating with the greatest unmeasured exposure, because the confidence that accompanies apparent measurement completeness reduces the intensity of the scrutiny that would reveal what is actually missing.

Grant Thornton’s 2026 AI Impact Survey, based on responses from 950 business executives, found that 78 percent of respondents lack confidence that they could pass an independent AI governance audit within 90 days. That figure is alarming on its face. What is more alarming is its inverse: the 22 percent who express strong confidence. The research finds that organizations do not arrive at governance confidence by building robust governance infrastructure. They arrive at it through a process of measurement that, like the military analysts examining bullet holes in surviving aircraft, systematically excludes the population that would challenge their conclusions. None of the 28 organizations at the earliest stage of AI exploration in the Grant Thornton survey expressed strong confidence in their ability to pass an AI governance audit. Confidence at the earliest stages is not low. It is nonexistent. Confidence is not correlated with actual governance quality. It correlates with the length of time an organization has been measuring the wrong things without consequences.

The organizations that are most certain about their AI risk posture are, by the logic of how AI systems behave, the ones most likely to be wrong.

The AI inventory illusion provides the most concrete illustration of the Heisenberg problem in enterprise AI governance. Research published in 2026 found that while 86 percent of organizations claim to maintain a complete AI inventory, these inventories typically reflect only approved tools and sanctioned use cases. Unapproved tools, embedded AI features within SaaS platforms, and employee-driven adoption consistently fall outside the scope of these inventories, creating blind spots where sensitive data is being exposed and consequential decisions are being made without detection or control. The act of compiling an AI inventory creates a documented sense of completeness that makes the most important category of AI risk, the AI that does not appear in the inventory, even harder to surface. The measurement has altered the system it was designed to observe.

The agentic AI governance gap quantifies this dynamic at an extraordinary scale. A 2026 survey of 1,879 IT leaders found that 97 percent of organizations are already exploring agentic AI strategies, and 49 percent describe their capabilities as advanced or expert. Yet only 12 percent use a centralized platform to maintain control over AI sprawl. That 85-point gap between stated engagement and operational control is not a gap in intention. It is a gap in measurement architecture: the instruments needed to reveal the true extent of exposure do not yet exist in the 88 percent of organizations that lack centralized control, many of which believe they understand their agentic AI risk.

The operational consequences of this measurement gap have already been documented. In one case cited by a chief information security officer at a major technology solutions provider, an AI-driven system at a beverage manufacturer failed to recognize its own products after the company introduced new holiday labels. Because the system interpreted the unfamiliar packaging as an error signal, it continuously triggered additional production runs, producing several hundred thousand excess cans before anyone identified the source of the problem. The system had not malfunctioned in a traditional sense. It had behaved with complete internal logic, doing exactly what it was instructed to do rather than what the organization intended. The gap between those two states was invisible until the damage had already accumulated because the measurement system in place was designed to detect malfunctions, not goal drift.

The following table maps the Heisenberg dynamics currently operating inside standard enterprise AI governance frameworks.

| Governance Measurement | What Confident Organizations Believe It Shows | What It Actually Obscures |
| --- | --- | --- |
| AI Inventory Completion | 86% claim complete AI inventory | Unapproved tools, embedded SaaS AI, and employee-driven adoption outside the inventory's scope |
| Incident Report Volume | Low incident counts signal effective governance | 31% of organizations do not know if they experienced an AI breach in the past 12 months |
| Vendor Compliance Certification | SOC reports and SLAs confirm vendor AI is governed | AI decision logic inside vendor systems is not covered by SLA monitoring |
| Agentic AI Awareness | 97% of organizations are exploring agentic AI strategies | Only 12% have centralized control; an 85-point governance gap is unmeasured |
| Cyber Policy Coverage | Existing policy extends to AI-related incidents | Standard cyber policies exclude first-party AI output liability; exclusions are now regulator-approved |
| Internal Audit Coverage | Annual AI risk review completed and filed | Point-in-time audit cannot detect real-time model drift, goal drift, or shadow AI emergence |
| Governance Confidence | 22% of executives express strong audit readiness | Confidence is uncorrelated with actual governance quality; none of the 28 organizations at the earliest exploration stage expressed strong confidence |

What the Missing Data Is Actually Telling You

The Wald framework produces a specific and actionable inference: the areas showing the least damage in your current risk dataset are the areas most likely to be generating fatal hits that are not returning to the dataset. The Heisenberg layer adds a second inference: the areas in which your organization feels most confident about its governance coverage are the areas most likely to contain the measurement blind spots that confidence has made invisible. Applied together to enterprise AI governance in 2026, these two frameworks produce a risk map that looks very different from the one most boards are currently reviewing.

The Allianz Risk Barometer for 2026 documents the momentum of this risk with unusual clarity. AI has risen from the number 10 position in the Barometer to number 2 in a single year, the single largest jump in the survey’s history, and it now ranks in the top three concerns for large, mid-sized, and smaller firms simultaneously across every major global region. The velocity of that movement is itself a Heisenberg signal: the precision with which organizations can measure their current AI risk position is inversely related to the speed at which the risk landscape is moving. By the time an annual AI risk review has been completed, validated, and reported to the audit committee, the momentum of the exposure it was designed to capture has already moved to a new position.

The SEC’s 2026 examination priorities have introduced a further dimension that most boards have not yet integrated into their AI risk frameworks. AI washing, defined as claiming to use AI to enhance services without doing so, or, conversely, understating AI exposure in regulatory disclosures, now carries compliance risks, including false and misleading statements, operational and governance risks, and direct exposure to regulatory sanctions. The organizations that have been most confident about their AI governance posture, and therefore most willing to make affirmative statements about it in investor communications and regulatory filings, may be the ones carrying the greatest undisclosed liability in the SEC’s current examination cycle.

The Independent Assurance Answer

Abraham Wald’s insight was not primarily statistical. It was architectural. He recognized that the data collection system itself was introducing a bias that made the most important information invisible to the analysts working within it. The solution was not to collect more data from the same system. It was to change the architecture of the inquiry so that the missing population could be inferred and acted upon. The Heisenberg corollary is that the organizations most likely to resist this architectural intervention are those that have built the most internally consistent measurement systems, because internal consistency breeds the confidence that makes independent verification seem unnecessary.

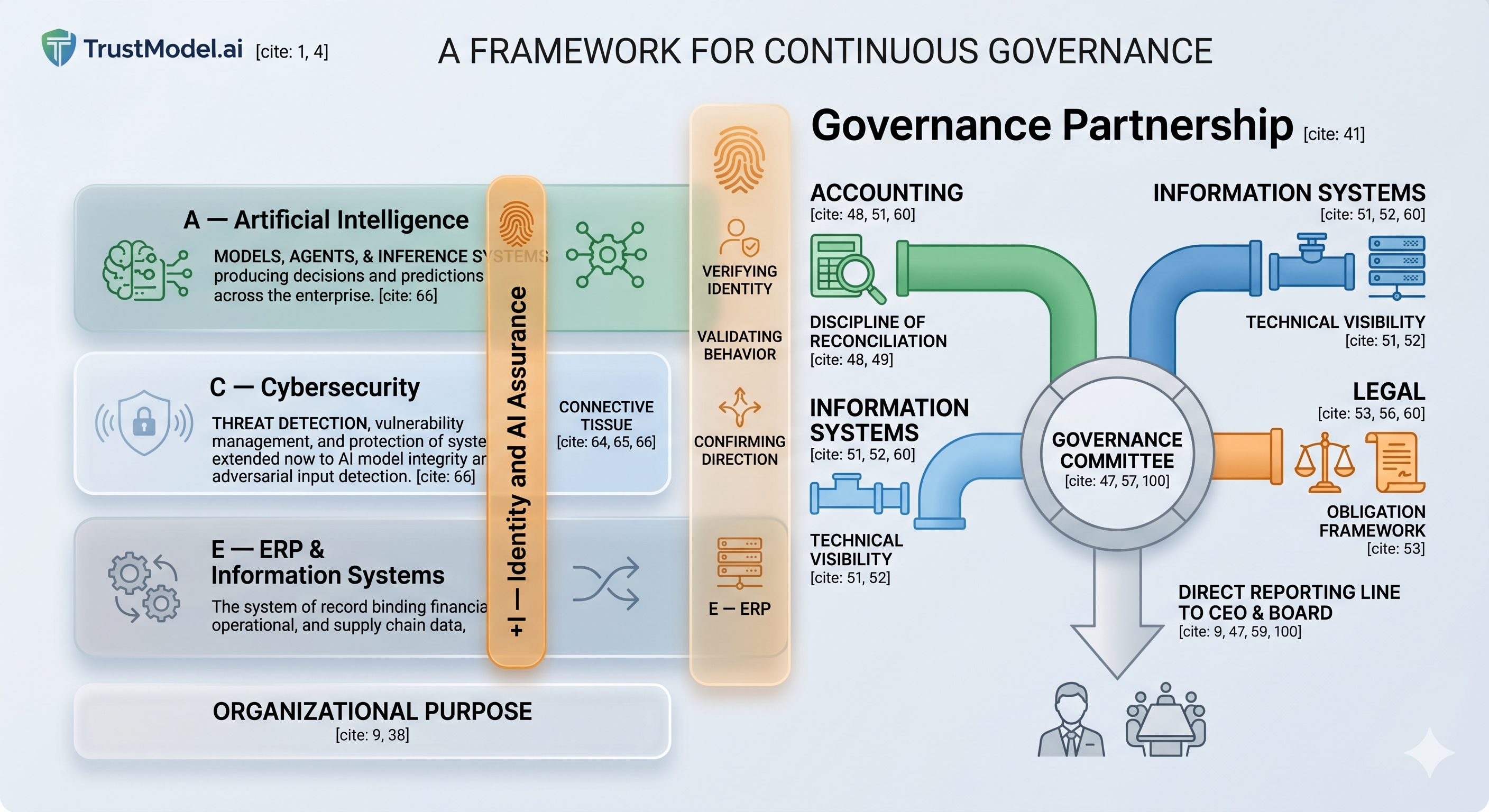

The enterprise AI governance equivalent of Wald’s architectural intervention is independent assurance: a third-party evaluation of AI systems that is not filtered through the organization’s incident-reporting channels, vendor-accountability assumptions, coverage beliefs, or inventory definitions. Independent assurance does not ask what went wrong and was reported. It asks what the system is doing across the full distribution of its outputs, including outputs that were never reviewed, decisions that were never questioned, autonomous actions that were never escalated, and shadow AI deployments that were never included in the approved tools inventory.

This is the function that external auditors perform for financial statements, and the reason their independence is a structural requirement rather than a courtesy. Management’s internal controls are necessarily subject to the same biases that Wald identified in the aircraft damage data. The external auditor’s mandate is to make the missing population visible. The SOC 2 certification standard established this architecture for cybersecurity governance in the previous decade. The enterprises that obtained SOC 2 certification early did not merely reduce their cyber insurance premiums. They made themselves insurable in a market that was beginning to exit the risk category, and they built the documented evidence base that regulators, investors, and counterparties subsequently required. The AI assurance certification frameworks of 2026 occupy the same structural position, at the same moment in the market cycle, with the same window of early-mover advantage available to organizations that move before it closes.

The Board Question That Changes Everything

The question that Abraham Wald asked, the one that reframed the entire problem, was deceptively simple: where are the planes that did not return? The Heisenberg addendum is equally important: how does the confidence your measurement system produces affect your ability to ask that question at all? The board-level equivalent for AI governance in 2026 combines both: what AI decisions are we making at scale that we have never individually reviewed, and has our current measurement architecture made us confident precisely where we should be most uncertain?

The answer to that question, pursued rigorously and independently through a structured third-party assessment, is the beginning of genuine AI governance. Everything that precedes it is a risk framework built on data from the surviving aircraft, administered by a measurement system that produces confidence in proportion to its incompleteness. The organizations that will emerge from this period with their governance reputations intact, their insurance coverage preserved, and their boards protected from personal liability are the ones that treat AI assurance the way they have always treated financial assurance: as a board-level requirement, independently delivered, documented against recognized standards, and renewed on a continuous basis rather than confirmed by an annual review that measures only the evidence it was designed to find.

Start the Conversation

I work with boards, CFOs, and executive leadership teams to lead the AI governance conversation and to bring independent AI assurance capability to the table. If your organization has not yet commissioned a structured, third-party assessment of its AI exposure across its deployed systems, commercial off-the-shelf integrations, and agentic AI environment, a QuickScan briefing will provide your leadership team with a board-ready evaluation of your current risk posture in a format your audit committee will immediately recognize.

Connect with me directly on LinkedIn or reach out via hindol@efuturescfo.com to arrange a QuickScan briefing for your board or executive team. The window in which early governance action confers the greatest advantage is precisely the window we are in.

Hindol Datta | CPA, CIA (Certified Internal Auditor), CMA, MBA | Fractional CFO, TrustModel.ai | hindol@efuturescfo.com

#AIGovernance #AIAssurance #AIRisk #SurvivorshipBias #BoardGovernance #FiduciaryDuty #CFO #GeneralCounsel #InternalAudit #AuditCommittee #EnterpriseRisk #EUAIAct #NIST #RiskManagement #SystemsCFO #eFuturesCFO #TrustModelAI #Assurance

AI-assisted insights, supplemented by 25 years of finance leadership experience.