A COSO-framed perspective for CFOs, CEOs, and Boards, as Google Cloud Next 2026 puts autonomous systems on center stage

This week in Las Vegas, Google Cloud Next 2026 opened with agentic artificial intelligence as its organizing theme. Thomas Kurian titled his keynote “The Agentic Cloud,” and the headline announcement was the Gemini Enterprise Agent Platform, positioned as the connective tissue for building, scaling, governing, and optimizing agents at enterprise scale. The numbers Sundar Pichai shared from the keynote stage capture the velocity of the moment. Seventy-five percent of Google Cloud customers now use its AI products. The company is processing 16 billion tokens per minute through direct customer API use, up from 10 billion only one quarter earlier. Capital expenditure is projected at 185 billion dollars in 2026, against 31 billion four years earlier. The messaging is consistent across Google, the hyperscaler ecosystem, and the system integrators around them. Agents are moving from pilot to production. Orchestration layers are replacing copilots. Data platforms are becoming reasoning surfaces. One featured session, led by Fei-Fei Li, names the problem directly: the human bottleneck, and why great technology fails when oversight cannot keep pace.

For technology leaders, these are exciting developments. For CFOs, CEOs, and boards, they raise questions of accountability. When a system acts autonomously inside the enterprise, who bears responsibility for its decisions? When a regulator, auditor, or shareholder asks how a particular outcome was produced, what evidence can leadership produce? These are the governance questions that will decide which organizations realize durable value from autonomous systems and which end up signing costly consent decrees.

COSO was built for this moment

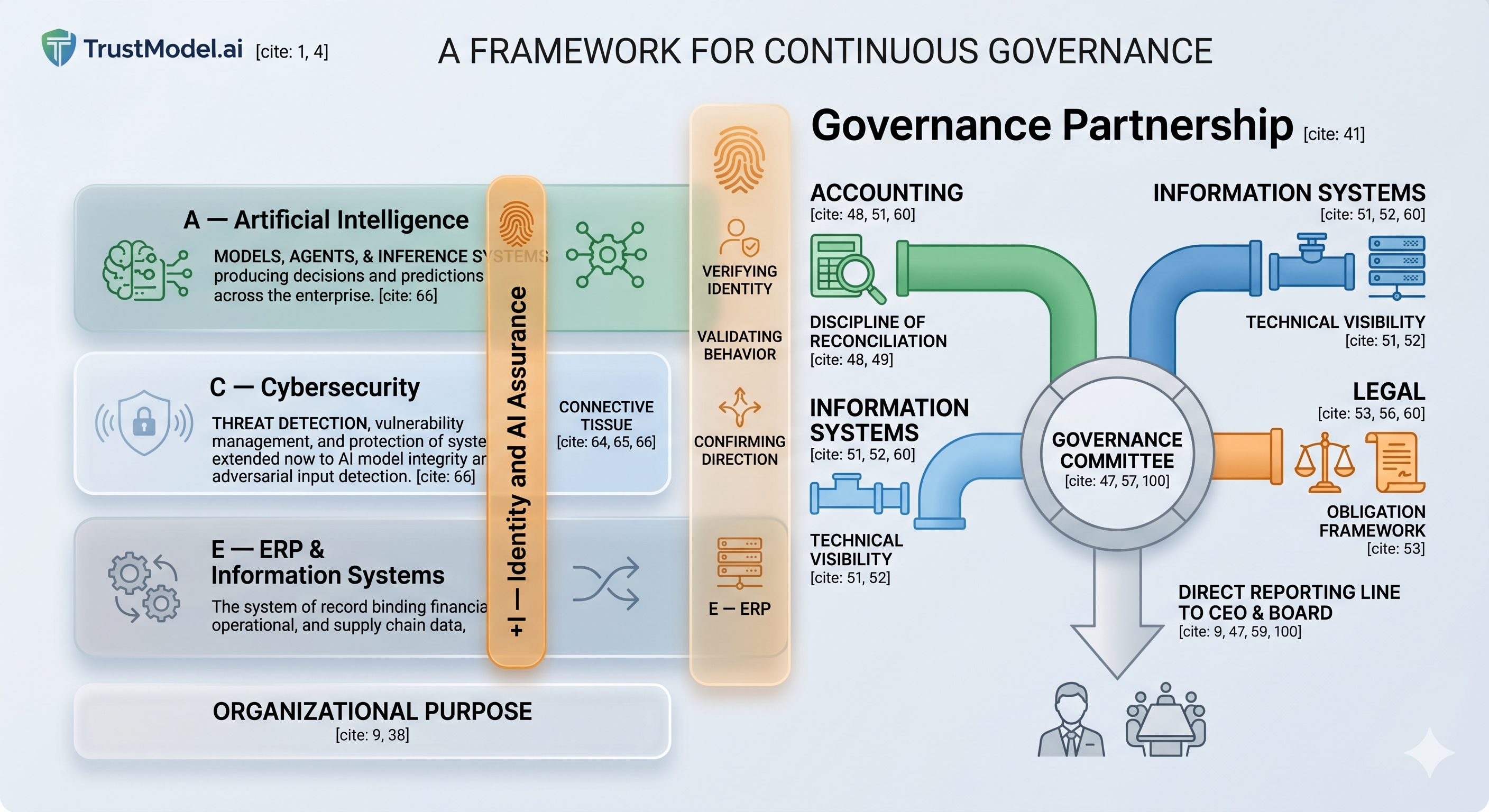

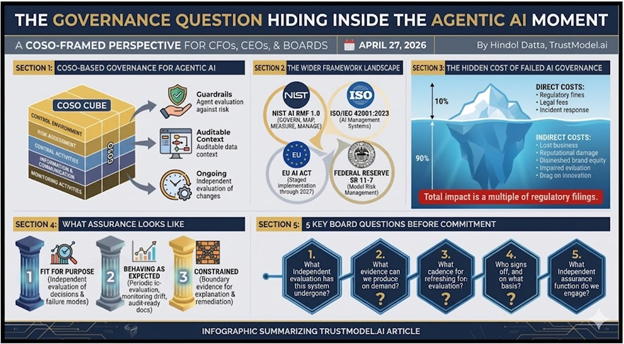

The Committee of Sponsoring Organizations of the Treadway Commission codified its internal controls framework in 1992 and updated it in 2013. Its five components, Control Environment, Risk Assessment, Control Activities, Information and Communication, and Monitoring Activities, constitute the core framework through which organizations demonstrate internal control over financial reporting and operational risk management. COSO was not written with autonomous AI systems in mind. And yet its components describe exactly the discipline that agentic systems require. Consider the procurement agent Unilever announced from the Next 2026 stage, which compresses procurement decision cycles from days to minutes. An agent of that kind initiates a vendor evaluation, applies a sourcing decision, and triggers downstream commitments. In doing so, it is executing a control activity. The question is not whether COSO applies. The question is whether leadership has extended COSO discipline to the autonomous layer or left it in a governance vacuum.

Three COSO principles that agentic systems stress-test immediately

Principle 10, Control Activities. COSO requires control activities that mitigate risks to objectives. When those controls are executed by autonomous agents, the mitigation is only as strong as the agent’s design, training data, and guardrails. Google itself acknowledged this at Next 2026 by announcing Agent Registry, Agent Identity, Agent Gateway, and Agent Observability as governance primitives within the Gemini Enterprise Agent Platform. These are necessary, but they are vendor-supplied controls. They are not a substitute for independent evaluation against the specific risks that matter inside the organization. A control performed by software that has not been independently evaluated is not one that leadership can attest to. Before any autonomous system executes a control activity, the organization should be able to produce evidence of the system’s evaluation against the specific risk it is supposed to mitigate.

Principle 13, Information and Communication. COSO requires relevant, quality information to support the functioning of internal control. Agentic systems draw on context from data platforms, knowledge graphs, and memory stores that were not part of the original control design. Google’s announcement of the Agentic Data Cloud at Next 2026 made this concrete. The new Knowledge Catalog continuously enriches enterprise data, autonomously extracting entities and mapping relationships, while a cross-cloud Lakehouse provides agents with seamless access to data wherever it resides. This is the information surface from which agents will draw their context. The COSO question is whether that surface is itself auditable. Can the organization demonstrate what information the agent was acting on, whether it was current, and whether the data informing the decision was itself subject to appropriate controls? When the catalog auto-enriches without human review, the integrity of every downstream control depends on that pipeline.
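One way to picture what "auditable" could mean here is a per-decision record of the information the agent consulted. The sketch below is illustrative only: the `ContextSource` schema, the field names, and the 90-day review window are assumptions made for this example, not part of COSO or of any Google product.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ContextSource:
    """One piece of information the agent consulted (hypothetical schema)."""
    source_id: str               # e.g. a catalog entry or table name
    retrieved_at: datetime       # when the agent read it
    last_reviewed_at: datetime   # when the data last passed a control or review
    content_sha256: str          # hash of the exact content the agent saw


def content_hash(payload: dict) -> str:
    """Deterministic hash of the content, so it can be re-verified later."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def stale_sources(sources, max_age: timedelta):
    """Return ids of sources whose last review was older than max_age when read."""
    return [s.source_id for s in sources
            if s.retrieved_at - s.last_reviewed_at > max_age]


# Hypothetical decision context: one reviewed table, one auto-enriched source.
now = datetime(2026, 4, 22, tzinfo=timezone.utc)
sources = [
    ContextSource("vendor_master", now, now - timedelta(days=3),
                  content_hash({"vendor": "A"})),
    ContextSource("auto_enriched_entities", now, now - timedelta(days=120),
                  content_hash({"entity": "B"})),
]
print(stale_sources(sources, max_age=timedelta(days=90)))
# ['auto_enriched_entities']
```

A record like this answers the three questions in the paragraph above: what the agent acted on (the content hash), whether it was current (the retrieval time), and whether it had been through a control (the last review time).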

Principle 16, Ongoing and Separate Evaluations. COSO requires an evaluation of whether control components are present and functioning. Autonomous systems change continuously. Google is retiring the Gemini 2.5 generation in favor of the 3.x line, with Gemini 3 Pro, Gemini 3 Flash, and Gemini 3.1 Pro already released, and Gemini 3.2 expected during the conference. Each model swap is, in COSO terms, a change in the control environment. Add to that the modifications customers make to prompts, the tool integrations they enable through managed MCP servers, and the guardrails they adjust through Model Armor, and the change cadence is no longer annual. It is continuous. Leadership should demonstrate that independent evaluation accompanies each material change, rather than being conducted once at deployment and never again.
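A simple way to tie evaluation to change is to fingerprint everything that can alter the agent's behavior and treat an evaluation as valid only for the fingerprint it was run against. The following is a minimal sketch under that assumption; the function names, the fields chosen, and the sample values are hypothetical.

```python
import hashlib
import json


def config_fingerprint(model_version, system_prompt, tools, guardrail_policy):
    """Hash every element whose change could alter the agent's behavior."""
    material = {
        "model_version": model_version,
        "system_prompt": system_prompt,
        "tools": sorted(tools),  # the order tools are listed in should not matter
        "guardrail_policy": guardrail_policy,
    }
    canonical = json.dumps(material, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def evaluation_is_current(evaluated_fingerprint, deployed_fingerprint):
    """An evaluation only covers the exact configuration it was run against."""
    return evaluated_fingerprint == deployed_fingerprint


# Hypothetical example: the model was swapped after the last evaluation.
evaluated = config_fingerprint(
    "model-v1", "You approve invoices under policy P.",
    ["erp_lookup"], {"max_amount": 10000})
deployed = config_fingerprint(
    "model-v2", "You approve invoices under policy P.",
    ["erp_lookup"], {"max_amount": 10000})
print(evaluation_is_current(evaluated, deployed))  # False
```

Whether a given change is material enough to require a full re-evaluation remains a judgment for the organization; the fingerprint only makes the change impossible to miss.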

The wider framework landscape boards should recognize

COSO provides the spine, but it does not stand alone. Four additional frameworks now shape the governance conversation and belong on the board agenda. It is also worth noting that Google published its 2026 Responsible AI Progress Report in February, which describes a seven-layer governance model spanning the AI lifecycle. The report is a useful vendor reference and a credible signal that the platform provider takes governance seriously. It is not, however, a substitute for the independent, COSO-disciplined evaluation that audit committees and external auditors will require.

NIST AI Risk Management Framework (AI RMF 1.0). Published by NIST in January 2023, the AI RMF organizes AI risk into four functions (Govern, Map, Measure, Manage) and has become the de facto U.S. reference framework, increasingly cited by federal regulators and in state-level AI legislation.

ISO/IEC 42001:2023, AI Management Systems. The international management systems standard for AI was published in December 2023. It is the AI equivalent of ISO 27001, and Big Four audit firms are building attestation practices around it.

The EU AI Act. In staged implementation since 2024. Unacceptable-risk prohibitions took effect in February 2025; general-purpose AI obligations began to apply in August 2025; high-risk requirements phase in through 2026 and 2027. For any organization with European customers, employees, or data subjects, the Act is already live.

Federal Reserve SR 11-7, Model Risk Management. Issued in 2011 for banking models, SR 11-7 reads now as if it were drafted for AI: independent validation, ongoing monitoring, documented limitations, change management, and effective challenge. For financial services boards, the governance discipline for AI is not new. It is the extension of a discipline banking regulators have required for more than a decade.

When autonomous systems fail: what boards should learn from the record

The cost of failed governance is not theoretical. The table below summarizes four publicly documented cases from the last several years, spanning hiring, lending, real estate, and capital markets. Specific companies are not named; the pattern is what matters.

| Sector / System Type | What Went Wrong | Direct Cost Range | Reputational and Indirect Impact |
| --- | --- | --- | --- |
| Hiring, an algorithmic screening tool at an education services company | AI screening automatically rejected female applicants over 55 and male applicants over 60, screening out more than 200 qualified candidates before human review. | Regulatory settlement in the low six figures, plus mandated process remediation and governance system build-out. | First-ever regulatory enforcement action of its kind, establishing the precedent now cited in every subsequent AI hiring case. Ongoing class-action exposure for employers using similar tools. |
| Consumer lending, AI-driven underwriting at a national student loan company | The algorithmic underwriting model produced a disparate impact on minority and non-citizen applicants. Variables correlated with protected characteristics were used without adequate fair-lending testing. | State attorney general settlement in the low millions, plus mandated fair-lending testing, internal controls program, and ongoing compliance reporting. | First significant state-level enforcement of AI underwriting discrimination. Cited across the lending industry as the reference case. Required creation of an independent algorithmic oversight function. |
| Real estate, algorithmic property valuation at a major technology-driven marketplace | An automated valuation model overestimated home prices in volatile markets, prompting the company to purchase thousands of homes at prices it could not recoup on resale. | Inventory write-downs in the hundreds of millions. Full business-unit shutdown. Workforce reduction of approximately 25 percent across the company. | Market capitalization declined by several billion dollars within days of disclosure. Multi-year impairment of the company’s flagship valuation product. Cited across the technology industry as the case study in over-trusted AI. |
| Capital markets, algorithmic trading system at a major U.S. equities market-maker | A deployment process failure left obsolete code active in production. The trading system executed millions of unintended orders across hundreds of securities in under an hour. | Trading loss in the mid-hundreds of millions, realized in under 45 minutes. Emergency rescue financing required to avoid insolvency. | Loss of independence: the firm was acquired within months. Regulatory overhaul of market-access controls industry-wide. Cited in every discussion of autonomous-system governance since. |

The hidden cost of failed AI governance

The direct settlement or write-down figures are only the visible portion. Deloitte’s analysis of cyber-incident impact concluded that direct, visible costs (regulatory fines, legal fees, incident response, and immediate remediation) account for only approximately 10 percent of the total financial impact of a significant technology failure. The other 90 percent sits below the waterline: lost business, customer churn, reputational damage, diminished brand equity, impaired valuation of affected product lines, management time consumed by remediation, and the drag on future innovation. Applied to the cases above, the true cost of each incident is a multiple of what regulatory filings disclose. A regulatory settlement in the low seven figures does not capture the value destruction when the case becomes the reference point every plaintiff’s attorney cites for the next decade. A nine-figure inventory write-down does not capture the multi-billion-dollar decline in market capitalization that followed. The direct number is the shock. The indirect number is the wound.
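The arithmetic behind the 10 percent figure is worth making explicit. The sketch below assumes the ratio from the cyber-incident analysis carries over to an AI governance failure, which is an extrapolation rather than a measured result, and it uses a hypothetical 2 million dollar settlement rather than a figure from any case in the table.

```python
def implied_total_impact(direct_cost, direct_share=0.10):
    """Total impact implied if direct costs are `direct_share` of the total."""
    if not 0 < direct_share <= 1:
        raise ValueError("direct_share must be in (0, 1]")
    return direct_cost / direct_share


direct = 2_000_000  # hypothetical settlement, not taken from any case above
total = implied_total_impact(direct)
indirect = total - direct
print(f"implied total: {total:,.0f}; indirect: {indirect:,.0f}")
# implied total: 20,000,000; indirect: 18,000,000
```

Under that assumption the disclosed number understates the total by a factor of ten, which is why the direct figure alone is a poor basis for sizing governance investment.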

Where GAAS and audit standards stand today

Generally Accepted Auditing Standards, issued by the AICPA Auditing Standards Board for private companies, and the parallel PCAOB standards for public companies, govern how external auditors opine on financial statements and internal control over financial reporting. These standards have not yet been meaningfully updated to address autonomous AI systems operating within audit clients. AU-C 315 requires auditors to understand the entity’s IT systems and their controls, and this requirement extends to AI. However, the traditional techniques of walkthrough, reperformance, observation, and test of controls assume a deterministic system or a human actor. Autonomous systems are neither. A system that performed within tolerance at the start of the audit period may behave differently at the end due to model retraining, modified prompts, or extended tool integrations.

The audit profession is aware of the gap. The AICPA’s Assurance Services Executive Committee has signaled interest in AI-specific attestation standards modeled on SOC 2, and the PCAOB is considering how ICFR attestation should evolve when autonomous systems execute controls. These updates are in development but have not yet been issued. Forward-looking audit firms are not waiting. They are engaging independent AI evaluation providers to supplement their attestation work, treating the evaluation as a specialized form of evidence, comparable to how actuarial specialists support audits of insurance reserves, or how valuation specialists support audits of complex financial instruments. The auditor who has access to an independent evaluation function can attest to these systems with confidence. The auditor who does not is signing opinions on systems whose behavior they cannot verify.

What assurance looks like when the agent is the actor

Traditional assurance rests on the premise that human actors operate within defined procedures and auditors test whether procedures were followed. When an autonomous system becomes the actor, assurance evolves along three dimensions:

Fit for purpose. Before deployment, leadership needs a documented, independent evaluation of the system against the specific decisions it will make and the failure modes that matter in the organization’s risk taxonomy. This means not a vendor certification but a third-party examination, comparable to what a financial audit provides for a general ledger.

Behaving as expected. After deployment, the organization needs periodic re-evaluation, monitoring of material drift, and on-demand audit-ready documentation. Assurance that is not refreshed has expired.

Constrained. When a system executes outside its intended boundaries, the organization needs to demonstrate the constraints in place, the boundary violations that occurred, and the remediation that followed. Google’s announcements at Next 2026, including Model Armor for prompt and response screening, the Agent Sandbox for hardened code execution, and the Agent Gateway for centralized policy enforcement, are constraint mechanisms in the platform layer. They are useful, and they will reduce certain classes of failure. They do not, by themselves, produce boundary evidence in a form that an external auditor or a regulator will accept. Boundary evidence is the difference between an incident that can be explained and one that cannot, and it requires independent attestation, not vendor self-reporting.
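To make "boundary evidence" concrete, one common pattern is a tamper-evident log in which each entry's hash covers the previous entry, so a record edited after the fact no longer verifies. This is a minimal sketch with hypothetical event fields and agent names; it illustrates the evidence format only and does not replace the independent attestation described above.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, event):
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_event(log, event):
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev_hash, "event": event,
             "hash": _entry_hash(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Recompute every hash; an entry edited after the fact breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical boundary violation and its remediation.
log = []
append_event(log, {"agent": "procurement-agent", "action": "commit_po",
                   "amount": 75000, "limit": 50000, "outcome": "blocked"})
append_event(log, {"agent": "procurement-agent", "action": "escalate",
                   "to": "controller", "outcome": "approved_by_human"})
print(verify_chain(log))  # True

log[0]["event"]["outcome"] = "allowed"  # simulate after-the-fact editing
print(verify_chain(log))  # False
```

The log captures the three things the paragraph asks for: the constraint in place (the limit), the violation (the attempted amount), and the remediation (the escalation). Who holds and verifies the chain is the independence question.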

The systemic risks that cannot be fully solved for

Intellectual honesty requires acknowledging what governance cannot fix. Some risks in autonomous systems are not fully addressable with current frameworks: emergent behavior at the intersection of multiple agents, particularly relevant now that Google has launched its Agent-to-Agent protocol in production at 150 organizations; errors that compound across agent chains even when each agent passes its individual threshold; distributional shift whose scale and speed outpace the evaluation cadence; and reward hacking in systems optimized against proxies that imperfectly capture intent. These are research problems as much as governance problems, and they will remain open even as frameworks mature. The goal of independent evaluation is not to eliminate these risks but to make them visible, bounded, and attributable to a specific assessment at a specific point in time, so leadership can demonstrate the risks were known and managed rather than ignored. A board that is told everything is fine has been failed by its advisors. A board that knows where the limits of current assurance lie, and what compensating controls are in place, is a board that can discharge its fiduciary duties with a clear conscience.
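The compounding-error risk is easy to underestimate, so a small worked example helps. The sketch assumes that errors at each step are independent and that any single error spoils the end result; the 98 percent accuracy, the 95 percent threshold, and the ten-step chain are hypothetical numbers chosen for illustration.

```python
def chain_success_rate(step_rates):
    """Probability that every step succeeds, assuming independent errors."""
    rate = 1.0
    for r in step_rates:
        rate *= r
    return rate


steps = [0.98] * 10   # ten agents, each 98 percent accurate (hypothetical)
threshold = 0.95      # per-agent acceptance threshold (hypothetical)

print(all(r >= threshold for r in steps))    # True: every agent passes alone
print(round(chain_success_rate(steps), 3))   # 0.817: the chain as a whole does not
```

Each agent clears its own bar, yet roughly one end-to-end run in five contains an error. Evaluating agents individually therefore says little about the chain, which is why evaluation has to cover the composed workflow.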

Five questions boards should ask before the next capital commitment

For any material AI deployment, including the platform-level decisions that Next 2026 has now placed squarely on the board agenda, leadership should be prepared to respond with documentation rather than assertions:

- What independent evaluation has this system undergone against the specific risks it is intended to mitigate and the risks it may itself introduce or exacerbate? Not a generic benchmark, not a vendor whitepaper, but an examination against the failure modes that matter in this organization’s risk taxonomy.

- What evidence can we produce to a regulator, auditor, or board committee on demand? If assembling the artifacts requires weeks, the evidence posture is not adequate to accept the risk.

- What changes since the last evaluation would invalidate the prior conclusion, and what is our cadence for refreshing it? Quarterly is reasonable for high-consequence systems. An annual cycle may be acceptable for lower-stakes deployments. Never is not acceptable. Given that Google itself is now releasing Gemini model upgrades on a quarterly cadence, the change frequency is no longer in the customer’s control.

- Who signs off, and on what basis? Sign-off should rest with an executive who can articulate the evaluation, residual risk, and monitoring plan. Sign-off delegated to the team that built the system is not an independent sign-off.

- What independent assurance function do we engage, and what evidence can it provide to our audit committee and external auditors? The capability to provide third-party evaluation evidence is becoming a board-level procurement question rather than an IT-level one. The Agent Gallery announced at Next 2026 already lists vetted third-party agents from Accenture, Deloitte, Salesforce, ServiceNow, S&P Global, Workday, and others. The procurement of agents is now a procurement of actors. It deserves the same rigor as the hiring of senior employees.

The opportunity and the responsibility

The platforms being announced this week, the Gemini Enterprise Agent Platform, the Agentic Data Cloud, Agentic Defense, and the Agentic Taskforce running across Customer Experience and Workspace, represent real economic potential. Autonomous systems will compress cycle times, reduce operational friction, and unlock categories of work previously unaddressable. Finance leaders and boards approaching this moment with discipline will capture that potential. Those approaching it without governance will discover, often in public, that the absence of assurance is itself a material risk. COSO provides a framework familiar to audit committees, internal auditors, and external examiners. Extending that framework to the autonomous layer is not a new discipline. It is the same discipline applied to a new class of actors. Organizations that do this work now will find that their ability to deploy autonomous systems responsibly becomes a source of competitive advantage, not a tax on innovation. Those that do not will find the conversation happens anyway, but on terms they do not control.

• • •

For further discussion on AI governance, assurance frameworks, and board-level oversight of autonomous systems, contact Hindol Datta at hindol@trustmodel.ai.

AI-assisted insights, supplemented by 25 years of finance leadership experience.